VideoReTalking alternatives

Open-source talking-head editing stack for re-syncing, re-voicing, and expression-aware face video edits.

This VideoReTalking alternatives guide compares pricing, strengths, tradeoffs, and related options.

VideoReTalking is a stronger fit than plain lip-sync tools when you already have a face video and need to re-time mouth motion, swap audio, or steer expressions while keeping a realistic talking-head output. It is more edit-oriented than LatentSync, which makes it useful in restoration and re-voice pipelines.

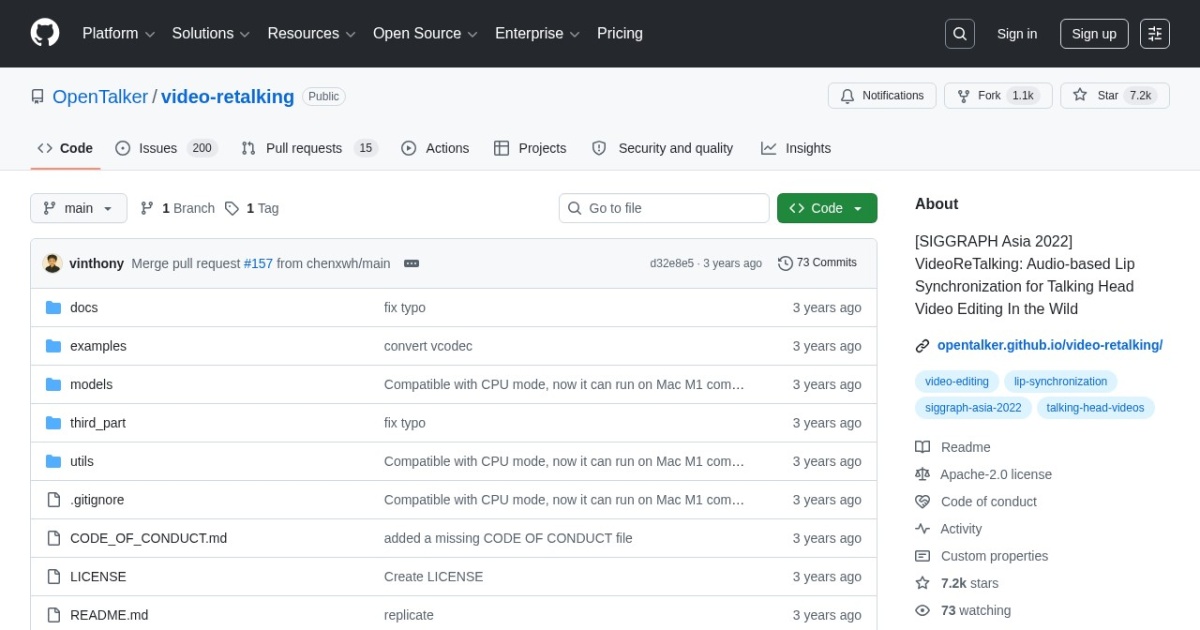

Official site: https://github.com/OpenTalker/video-retalking

Company YouTube: no official channel was found when reviewing the project's official pages.

At a glance

| Attribute | Details |
|---|---|
| Pricing model | Free |
| Page type | Open-source project |
| Model source | 3rd-party models |
| Price range | Free (open-source) |
| Best for | Talking-head repair and re-sync for existing video |
| Categories | For Creators, Video, Virtual Avatars, Free AI Tools, Local LLMs |

Top alternatives

- LatentSync: Open-source lip-sync framework for generating talking portrait videos from audio and face inputs.
- Wav2Lip: Open-source lip-sync model for syncing speech to an existing face video or portrait clip.
- Hallo: Open-source portrait animation model for higher-fidelity talking-head generation from one image and driving audio.
- LivePortrait: Open-source local portrait animation tool that turns a single image into a talking video.
- MuseTalk: Open-source real-time lip-sync framework for talking avatar and portrait video workflows.
- D-ID: AI avatar and talking-head video platform for explainers, campaigns, and influencer-style content.

Notes

VideoReTalking is the practical open-source choice when you want to edit an existing talking-head clip rather than generate everything from scratch. For a full workflow, see the virtual talking avatars tutorial.
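As a rough illustration of the "edit an existing clip" workflow, the sketch below builds the command line for re-syncing a face video to a new audio track. It assumes the repository's `inference.py` entry point and its `--face`, `--audio`, and `--outfile` flags as shown in the project README; check them against the current repository before relying on them. The helper name `build_retalk_command` and all file paths are hypothetical.

```python
import shlex


def build_retalk_command(face_video, audio, outfile, script="inference.py"):
    """Build the argument list for one re-sync run.

    Assumption: the flags mirror the quick-inference example in the
    VideoReTalking README; verify them against the repository.
    """
    return [
        "python3", script,
        "--face", face_video,    # existing talking-head clip to edit
        "--audio", audio,        # replacement speech track
        "--outfile", outfile,    # where the re-synced video is written
    ]


# Hypothetical paths, for illustration only.
cmd = build_retalk_command(
    "clips/host.mp4",
    "audio/new_voice.wav",
    "results/host_resynced.mp4",
)

# Print the command for inspection; pass `cmd` to subprocess.run(...)
# from inside a checkout of the repository to actually execute it.
print(shlex.join(cmd))
```

Building the arguments as a list rather than a single string avoids shell-quoting problems when file names contain spaces.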

Comparison table

| Tool | Pricing | Page type | Model source | Price range | Pros | Cons |
|---|---|---|---|---|---|---|
| VideoReTalking | Free | Open-source project | 3rd-party models | Free (open-source) | Better fit for editing existing talking-head footage than single-image avatar tools; Apache-2.0 is cleaner for commercial evaluation than many research-only releases | More moving parts than simpler lip-sync scripts; Setup is still technical compared with hosted avatar products |
| LatentSync | Free | Open-source project | 3rd-party models | Free (open-source) | Free local workflow with no per-render subscription fee; Useful baseline for talking portrait generation | Technical installation compared with hosted tools; Generation quality can be inconsistent across inputs |
| Wav2Lip | Free | Open-source project | 3rd-party models | Free (open-source) | Strong baseline lip-sync quality for an older open model; Works on existing face videos rather than only single-image animation | Open release is older and less polished than newer avatar stacks; License posture is less friendly for commercial productization than Apache or MIT options |
| Hallo | Free | Open-source project | 3rd-party models | Free (open-source) | Stronger portrait-animation quality target than basic lip-sync baselines; MIT license is relatively simple for commercial review | Heavier runtime and setup requirements than smaller lip-sync tools; Input prep is stricter than quick hosted avatar tools |
| LivePortrait | Free | Open-source project | 3rd-party models | Free (open-source) | Free to use with local execution; Good control for image-to-video avatar experiments | Setup and dependency management can be technical; Quality varies with source image and driving signal |
| MuseTalk | Free | Open-source project | 3rd-party models | Free (open-source) | Free local workflow with no per-render subscription fee; Useful baseline for talking portrait generation | Technical installation compared with hosted tools; Generation quality can be inconsistent across inputs |
| D-ID | Subscription | Product/service | Own models | $5.90-$195.99+/mo | Fast avatar video creation from script or audio; Useful for campaign and explainer workflows | Visual realism and lip-sync quality can vary by scenario; Brand-safe output still needs manual QA |
