Wav2Lip alternatives

Open-source lip-sync model for syncing speech to an existing face video or portrait clip.

This guide compares Wav2Lip with its main alternatives on pricing, strengths, and tradeoffs.

Wav2Lip is still one of the baseline open-source references for audio-to-lip synchronization. It predates LatentSync and the newer diffusion-based portrait stacks, but it remains useful when you want straightforward lip-sync on existing face footage rather than a broader talking-avatar studio.

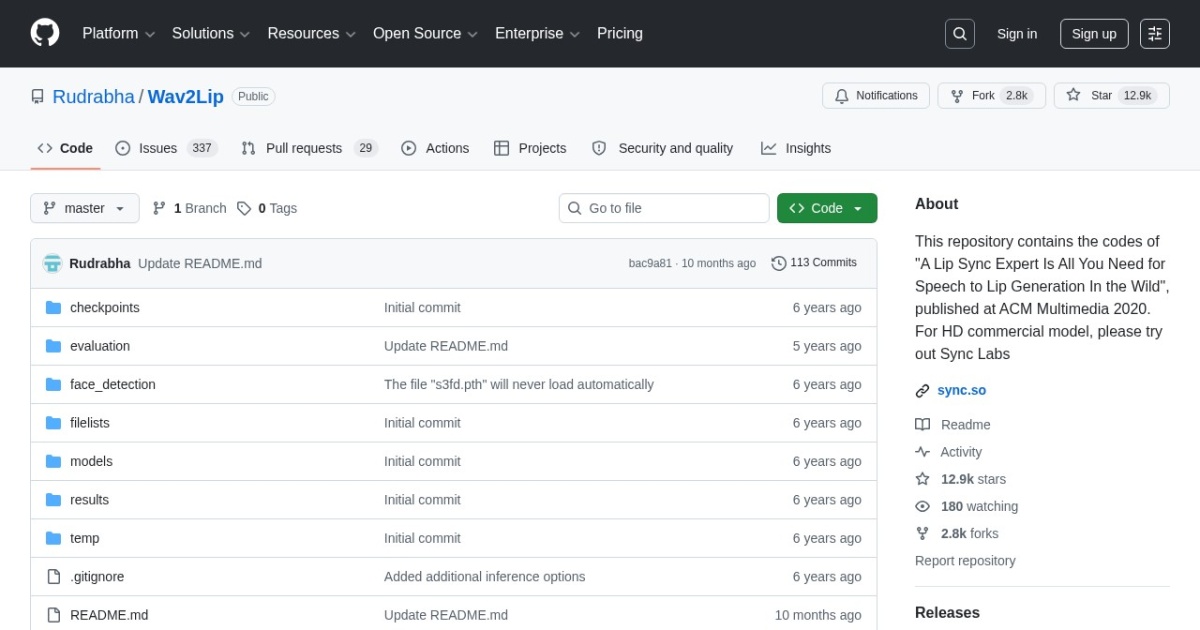

Official site: https://github.com/Rudrabha/Wav2Lip

Company YouTube: No official channel was found when reviewing the official project page.

At a glance

| Field | Value |
|---|---|
| Pricing model | Free |
| Page type | Open-source project |
| Model source | 3rd-party models |
| Price range | Free (open-source) |
| Best for | Fast local lip-sync for recorded face video |
| Categories | For Creators, Video, Virtual Avatars, Free AI Tools, Local LLMs |

Top alternatives

- LatentSync: Open-source lip-sync framework for generating talking portrait videos from audio and face inputs.

- VideoReTalking: Open-source talking-head editing stack for re-syncing, re-voicing, and expression-aware face video edits.

- Hallo: Open-source portrait animation model for higher-fidelity talking-head generation from one image and driving audio.

- EchoMimic: Open-source audio-driven portrait animation framework with editable landmark control and newer multimodal animation branches.

- MuseTalk: Open-source real-time lip-sync framework for talking avatar and portrait video workflows.

- HeyGen: Avatar and talking-head video generator for quick production.

Notes

Wav2Lip is still worth keeping in the catalog because many lip-sync workflows are judged against it even when teams eventually move to newer models. For a full workflow, see the virtual talking avatars tutorial.

Comparison table

| Tool | Pricing | Page type | Model source | Price range | Pros | Cons |
|---|---|---|---|---|---|---|
| Wav2Lip | Free | Open-source project | 3rd-party models | Free (open-source) | Strong baseline lip-sync quality for an older open model; Works on existing face videos rather than only single-image animation | Open release is older and less polished than newer avatar stacks; License terms are less friendly to commercial productization than Apache or MIT options |
| LatentSync | Free | Open-source project | 3rd-party models | Free (open-source) | Free local workflow with no per-render subscription fee; Useful baseline for talking portrait generation | Installation is more technical than with hosted tools; Generation quality can be inconsistent across inputs |
| VideoReTalking | Free | Open-source project | 3rd-party models | Free (open-source) | Better fit for editing existing talking-head footage than single-image avatar tools; Apache-2.0 is cleaner for commercial evaluation than many research-only releases | More moving parts than simpler lip-sync scripts; Setup is still technical compared with hosted avatar products |
| Hallo | Free | Open-source project | 3rd-party models | Free (open-source) | Targets higher portrait-animation quality than basic lip-sync baselines; MIT license is relatively simple for commercial review | Heavier runtime and setup requirements than smaller lip-sync tools; Input prep is stricter than with quick hosted avatar tools |
| EchoMimic | Free | Open-source project | 3rd-party models | Free (open-source) | More controllable portrait animation than simple mouth-sync baselines; Apache-2.0 is easier to review than restrictive research-only terms | More experimental workflow than mainstream hosted avatar tools; Hardware needs can be substantial for comfortable iteration |
| MuseTalk | Free | Open-source project | 3rd-party models | Free (open-source) | Free local workflow with no per-render subscription fee; Useful baseline for talking portrait generation | Installation is more technical than with hosted tools; Generation quality can be inconsistent across inputs |
| HeyGen | Subscription | Product/service | Own models | $29-$299+/mo | Fast setup for solo teams; Useful template support for repeatable workflows | Costs can increase with higher usage; Output quality depends on prompt quality |

Internal links

Related best pages

- Best AI Voiceover Tools

- Best AI Tools for YouTube Shorts

- Best AI Video Repurposing Tools

- Best AI Thumbnail Generators