Qwen2.5 Coder alternatives

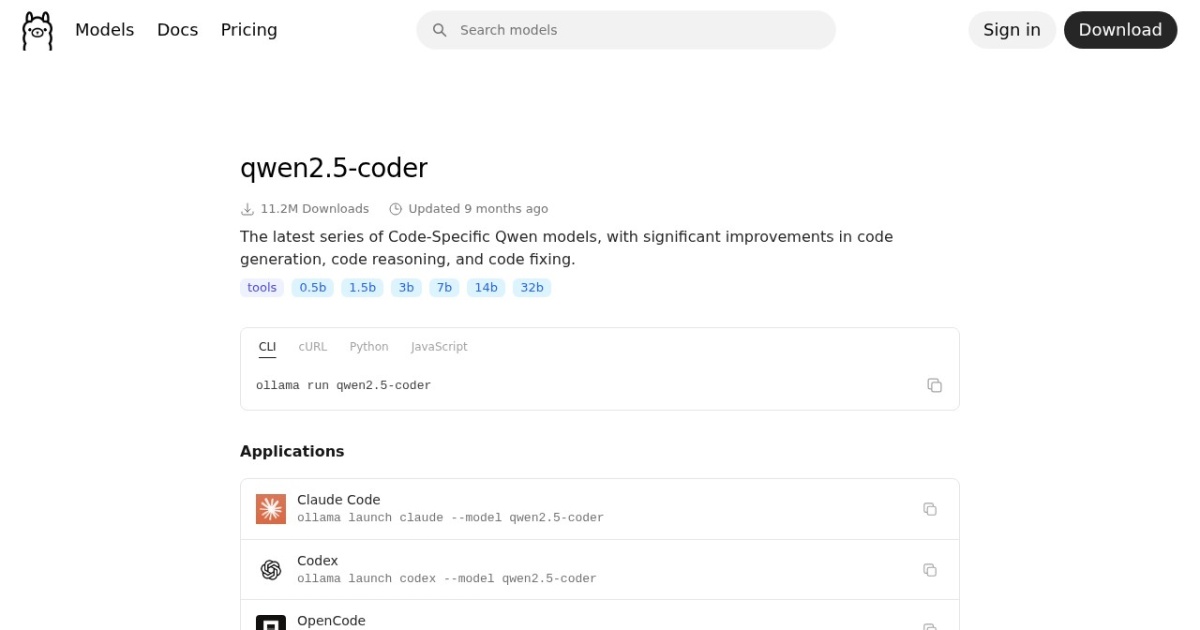

Code-focused Qwen model family tuned for programming, debugging, and refactoring workflows.

This Qwen2.5 Coder alternatives guide compares pricing, strengths, tradeoffs, and related options.

Qwen2.5 Coder is a practical local coding model line that balances quality and size options for developer-heavy workloads.

Official site: https://ollama.com/library/qwen2.5-coder

Company YouTube: No official company YouTube channel found during official-page review.
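The official listing is the Ollama library page, so the most direct way to try the model locally is through Ollama's REST API. The example below is a minimal, non-streaming call that uses only the Python standard library. It assumes Ollama is running on its default address (`http://localhost:11434`) and that the 7B tag has already been pulled with `ollama pull qwen2.5-coder:7b`. The helper names `build_request` and `generate` are illustrative and are not part of any Ollama SDK.

```python
import json
import urllib.request

# Assumption: Ollama's default local address; adjust if your server runs elsewhere.
OLLAMA_URL = "http://localhost:11434/api/generate"
DEFAULT_MODEL = "qwen2.5-coder:7b"  # any pulled size tag works, e.g. "qwen2.5-coder:1.5b"


def build_request(prompt: str, model: str = DEFAULT_MODEL) -> dict:
    """Payload for /api/generate; stream=False asks for a single JSON reply."""
    return {"model": model, "prompt": prompt, "stream": False}


def generate(prompt: str, model: str = DEFAULT_MODEL, timeout: float = 120.0) -> str:
    """Send one prompt to the local Ollama server and return the completion text."""
    data = json.dumps(build_request(prompt, model)).encode("utf-8")
    req = urllib.request.Request(
        OLLAMA_URL, data=data, headers={"Content-Type": "application/json"}
    )  # passing data makes this a POST request
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        body = json.load(resp)
    return body["response"]  # non-streaming replies carry the text in "response"


if __name__ == "__main__":
    try:
        print(generate("Write a Python function that checks whether a string is a palindrome."))
    except OSError as exc:  # URLError subclasses OSError (e.g. no server listening)
        print(f"Could not reach Ollama at {OLLAMA_URL}: {exc}")
```

Smaller tags such as `qwen2.5-coder:1.5b` respond faster on modest hardware. Larger tags generally produce stronger code but need more memory.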

At a glance

| Pricing model | Free |
|---|---|
| Page type | Model family |
| Model source | Own models |
| Price range | Free (open weights) |
| Model last update | 2025-05-22 (Ollama library "Updated 9 months ago", inferred from retrieval date) |
| Model sizes (parameters) | 0.5B, 1.5B, 3B, 7B, 14B, 32B |
| Model versions | Qwen2.5-Coder release, Ollama library refresh |
| Best for | Local coding and debugging support, Refactoring and code review assistance, Self-hosted development agent workflows |
| Categories | For Solopreneurs, For Small Business, Free AI Tools, Developers |
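The six listed sizes have very different memory footprints. A common rule of thumb is that weight memory equals parameter count × bits per weight ÷ 8 bytes, so a 7B model at 4 bits needs about 3.5 GB for its weights alone. The example below applies that rule to the listed sizes. Two values are assumptions rather than measured Ollama figures: the 4.5 bits/weight default approximates a 4-bit quantization with scale overhead, and the 1.2× `overhead` multiplier roughly covers KV cache and runtime buffers. Real usage grows with context length.

```python
# Rough VRAM estimate for the Qwen2.5 Coder sizes listed on this page.
# Assumptions (not measured Ollama figures): bits_per_weight approximates a
# 4-bit quantization with scale overhead; `overhead` is a hypothetical multiplier
# covering KV cache and runtime buffers at modest context lengths.

SIZES_B = [0.5, 1.5, 3, 7, 14, 32]  # nominal sizes, billions of parameters


def weight_gb(params_b: float, bits_per_weight: float) -> float:
    """Weight memory in decimal GB: (params_b * 1e9) * bits / 8 bytes / 1e9."""
    return params_b * bits_per_weight / 8


def largest_fit(vram_gb: float, bits_per_weight: float = 4.5, overhead: float = 1.2):
    """Largest listed size whose estimated footprint fits in vram_gb, else None."""
    fitting = [
        size for size in SIZES_B
        if weight_gb(size, bits_per_weight) * overhead <= vram_gb
    ]
    return max(fitting) if fitting else None


if __name__ == "__main__":
    for size in SIZES_B:
        print(f"{size:>4}B  ~{weight_gb(size, 4.5):5.2f} GB weights at 4.5 bits/weight")
    for vram in (4, 8, 12, 24):
        print(f"{vram:>2} GB VRAM -> largest fit: {largest_fit(vram)}B")
```

Under these assumptions, `largest_fit(8)` returns 7. In other words, an 8 GB card should hold the 7B variant but not the 14B one. Treat the result as a starting point, and check the actual GPU/CPU split of a loaded model with `ollama ps`.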


Top alternatives

- GLM-4.7-Flash: Lightweight GLM 4.7 branch focused on fast coding, reasoning, and long-context generation.

- Phi-3 Mini: Lightweight Phi model family for fast local inference on modest hardware.

- DeepSeek-R1: Reasoning-focused open-weight family with MIT core licensing and smaller distilled options.

- Goose: Open-source local engineering agent for code edits, terminal tasks, and tool-driven workflows.

Notes

Qwen2.5 Coder is one of the most practical local coding-focused model families for Ollama users. Its six sizes, from 0.5B to 32B parameters, let you match the model to the hardware you have.

Comparison table

| Tool | Pricing | Page type | Model source | Price range | API cost | Subscription cost | Pros | Cons |
|---|---|---|---|---|---|---|---|---|
| Qwen2.5 Coder | Free | Model family | Own models | Free (open weights) | No required vendor API cost for local/self-hosted use. | No mandatory subscription for base model access. | Strong coding-oriented instruction following; Multiple size choices for different VRAM budgets | Larger variants may spill into system RAM on lower-VRAM cards, which slows inference; Requires disciplined prompt-and-test loops for reliability |
| GLM-4.7-Flash | Free | Model family | Own models | Free (open weights) | No required vendor API cost for local/self-hosted use. | No mandatory subscription for base model access. | Strong coding and reasoning performance for its deployment class; Better speed/efficiency profile than large flagship stacks | Output quality still needs prompt discipline and QA; Tooling/runtime support can lag right after new releases |
| Phi-3 Mini | Free | Model family | Own models | Free (open weights) | No required vendor API cost for local/self-hosted use. | No mandatory subscription for base model access. | Fast on lower-end local hardware; Lower VRAM pressure than larger model families | Lower ceiling on complex reasoning tasks; Can underperform larger models on nuanced prompts |
| DeepSeek-R1 | Free | Model family | Own models | Free (open weights) | No required vendor API cost for local/self-hosted use. | No mandatory subscription for base model access. | MIT core licensing is commercially friendly; Strong reasoning orientation for analytical tasks | Flagship model sizes are impractical for most solo local setups; Distill licensing can vary based on upstream model lineage |
| Goose | Free | Open-source project | 3rd-party models | Free (open-source) | No separate API pricing; any API cost comes from the third-party model provider you configure. | No subscription for Goose itself; model costs depend on your chosen provider. | Open-source local-first workflow with good repository control; Strong terminal and tool execution model for engineering tasks | Setup is more technical than hosted IDE copilots; Model quality and latency depend on your provider choice |
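The table notes that Qwen2.5 Coder needs disciplined prompt and test loops for reliability, and the same holds for every model listed here. The example below makes that concrete with a minimal generate, test, retry loop. It is a sketch under stated assumptions and is not a feature of Ollama, Qwen, or Goose. `generate` is any function you supply that sends a prompt to your local model and returns text. The names `generate_until_green`, `fake_generate`, and `check_add` are hypothetical and only illustrate the idea. Running model output through `exec()` is acceptable for a demo only; a real setup should run candidate code in a sandbox or a separate process.

```python
from typing import Callable, Optional, Tuple

FENCE = "`" * 3  # built at runtime so this snippet contains no literal fence


def extract_code(text: str) -> str:
    """Return the body of the first fenced block if the model added one."""
    if FENCE not in text:
        return text
    body = text.split(FENCE)[1]
    first, _, rest = body.partition("\n")
    # Drop a language tag such as "python" on the opening fence line.
    return rest if first.strip() == "" or first.strip().isidentifier() else body


def run_tests(source: str, tests: Callable[[dict], None]) -> Optional[str]:
    """Exec candidate code in a fresh namespace, then run the test callback.

    Returns None on success or a short error string on failure.
    WARNING: exec() runs untrusted model output; sandbox this in real use.
    """
    namespace: dict = {}
    try:
        exec(source, namespace)
        tests(namespace)
    except Exception as exc:  # SyntaxError, NameError, AssertionError, ...
        return f"{type(exc).__name__}: {exc}"
    return None


def generate_until_green(
    task: str,
    generate: Callable[[str], str],
    tests: Callable[[dict], None],
    max_attempts: int = 3,
) -> Tuple[Optional[str], int]:
    """Ask for code, test it, and feed failures back until the tests pass."""
    prompt = task
    for attempt in range(1, max_attempts + 1):
        source = extract_code(generate(prompt))
        error = run_tests(source, tests)
        if error is None:
            return source, attempt
        # Include the failing code and error so the next attempt can correct it.
        prompt = (
            f"{task}\n\nYour previous code:\n{source}\n"
            f"It failed with: {error}\nReturn only corrected Python code."
        )
    return None, max_attempts


# Hypothetical stand-in for a real model call: wrong first, fixed after feedback.
def fake_generate(prompt: str) -> str:
    if "failed with" in prompt:
        return FENCE + "python\ndef add(a, b):\n    return a + b\n" + FENCE
    return "def add(a, b):\n    return a - b\n"


def check_add(ns: dict) -> None:
    assert ns["add"](2, 3) == 5


if __name__ == "__main__":
    code, attempts = generate_until_green("Write add(a, b).", fake_generate, check_add)
    print(f"passed after {attempts} attempt(s):\n{code}")
```

Because `generate` is passed in as an argument, the loop can be tested without a running server. To point the loop at a local model, replace `fake_generate` with a function that calls your model.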


Related best pages

- Best Free LLMs for Solopreneurs

- Best Free AI Tools for Solopreneurs

- Best AI Automation Tools

- Best AI Email Marketing Tools