DeepSeek-R1 alternatives

Reasoning-focused open-weight family with MIT core licensing and smaller distilled options.

This DeepSeek-R1 alternatives guide compares pricing, strengths, tradeoffs, and related options.

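DeepSeek-R1 is relevant for solopreneurs who want strong reasoning behavior in open-weight workflows. The flagship checkpoint is a 671B-parameter mixture-of-experts model (37B active per token), so practical local use usually relies on the smaller distilled checkpoints. Those distills need careful license checks, because they inherit terms from their Qwen2.5 and Llama 3.x base models.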
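To see why the flagship is out of reach for most solo setups, a back-of-the-envelope weight-memory estimate helps. This sketch assumes memory ≈ parameters × bits per weight ÷ 8 and counts weights only (no KV cache, activations, or runtime overhead). The 16-bit and 4-bit precisions are illustrative choices, not official recommendations.

```python
# Rough weight-memory estimate: parameters x bits per weight / 8 bytes.
# Assumption: weights only; KV cache, activations, and runtime overhead are
# ignored, so real memory requirements are higher than these numbers.

def weight_memory_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate memory (decimal GB) needed just to hold the model weights."""
    bytes_total = params_billions * 1e9 * bits_per_weight / 8
    return bytes_total / 1e9

# Sizes from the at-a-glance table. The 671B flagship is MoE: only ~37B
# parameters are active per token, but all 671B must still be loaded or offloaded.
SIZES_B = [1.5, 7, 8, 14, 32, 70, 671]

for size in SIZES_B:
    print(f"{size:>6}B  16-bit: {weight_memory_gb(size, 16):8.1f} GB   "
          f"4-bit: {weight_memory_gb(size, 4):7.1f} GB")
```

At 4-bit, a 7B distill needs roughly 3.5 GB for its weights, while the 671B flagship still needs about 335 GB, which is why the distills are the practical entry point.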

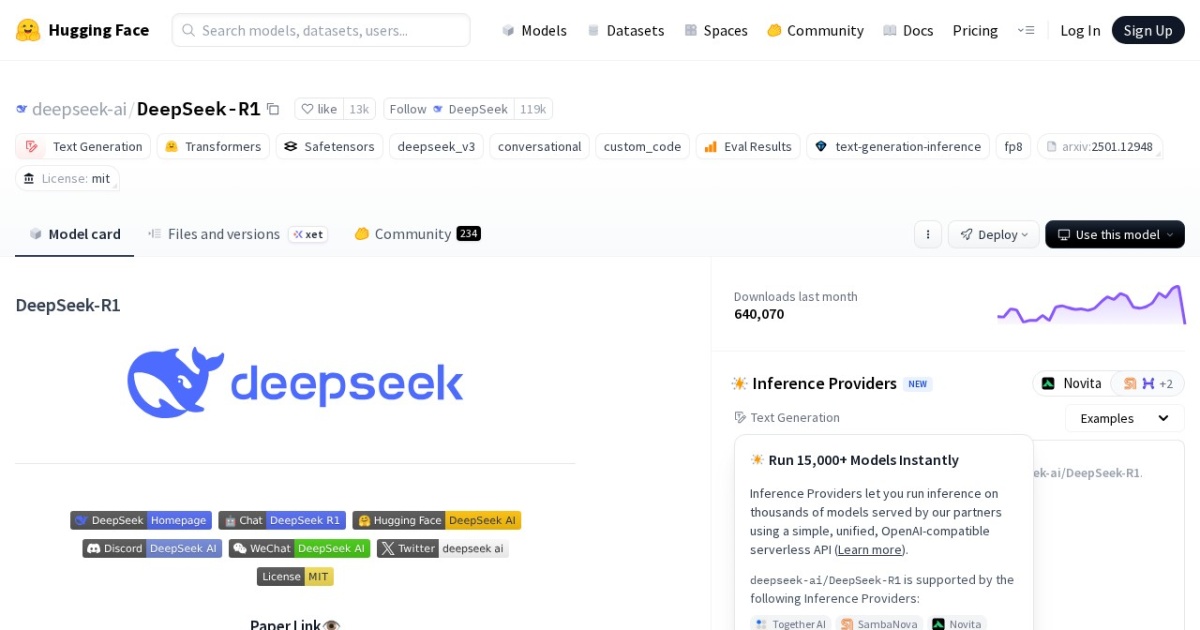

Official site: https://huggingface.co/deepseek-ai/DeepSeek-R1

Company YouTube: No official company YouTube channel found during official-page review.

At a glance

| Pricing model | Free |
|---|---|
| Page type | Model family |
| Model source | Own models |
| API cost | No required vendor API cost for local/self-hosted use. |
| Subscription cost | No mandatory subscription for base model access. |
| Model last update | 2025-03-27 (Hugging Face API lastModified). |
| Parameter counts | Distills: 1.5B, 7B, 8B, 14B, 32B, 70B; flagship (MoE): 671B total / 37B active |
| Best for | Reasoning-heavy workflows on distilled checkpoints, Local experimentation with open model pipelines, Teams that want OpenAI-style API integration patterns |
| Categories | For Solopreneurs, For Small Business, Free AI Tools, Automation, Developers, Local LLMs |
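The "OpenAI-style API integration" point means the same client code can usually target a local server or a hosted endpoint. Below is a minimal stdlib-only sketch, assuming a local server that exposes an OpenAI-compatible `/v1/chat/completions` route (Ollama is one example). `BASE_URL`, the `deepseek-r1:7b` tag, and the helper names are illustrative assumptions, not values from this page. It also strips `<think>...</think>` blocks, since R1-style models typically emit their reasoning that way, although some servers already separate it out.

```python
import json
import re
import urllib.request

# Assumption: a local server exposing an OpenAI-compatible chat completions
# endpoint (e.g. Ollama at http://localhost:11434/v1). Both values below are
# hypothetical placeholders; check your own server's documentation.
BASE_URL = "http://localhost:11434/v1"
MODEL = "deepseek-r1:7b"  # hypothetical local tag for a 7B distill

def build_chat_payload(prompt: str, model: str = MODEL) -> dict:
    """Build an OpenAI-style chat completions request body."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }

def strip_reasoning(text: str) -> str:
    """Remove <think>...</think> blocks that R1-style models may emit before the answer."""
    return re.sub(r"<think>.*?</think>", "", text, flags=re.DOTALL).strip()

def chat(prompt: str, base_url: str = BASE_URL) -> str:
    """POST the payload and return the first choice's message content."""
    body = json.dumps(build_chat_payload(prompt)).encode("utf-8")
    req = urllib.request.Request(
        f"{base_url}/chat/completions",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=120) as resp:
        data = json.load(resp)
    return strip_reasoning(data["choices"][0]["message"]["content"])
```

With a compatible server running, `chat("Summarize this contract clause.")` returns just the answer text; pointing `base_url` at a hosted OpenAI-compatible endpoint would also require that provider's auth header.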

Top alternatives

- DeepSeek-V4: Preview open-weight DeepSeek family with Pro and Flash MoE models, 1M context, and strong coding and agentic reasoning focus.

- NVIDIA Nemotron: Open model family for agentic AI with reasoning-focused releases across edge, single-GPU, and multi-GPU tiers.

- Qwen3 8B: Apache-2.0 open-weight 8B model with 128K context, local-first deployment, and optional cloud API access.

- gpt-oss-20b: Apache-2.0 open-weight text model with long context and practical local deployment targets.

- Ministral 3 8B: Apache-2.0 open-weight 8B model tuned for efficient local use with very long context.

Notes

DeepSeek-R1 is most useful when you treat model choice and license lineage as a paired decision.
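One way to make the "paired decision" concrete is to look up a checkpoint's upstream base model before choosing it. The mapping below reflects the base models listed on the DeepSeek-R1 Hugging Face model card at the time of writing; `DISTILL_LINEAGE` and `licenses_to_review` are hypothetical names, and returning "MIT plus the upstream license" is a simplified illustration of what to read, not a complete legal analysis.

```python
# License lineage for the R1 distills, as listed on the DeepSeek-R1 Hugging Face
# model card at the time of writing. Assumption: this may drift, so re-check
# each checkpoint's own model card before commercial use.
DISTILL_LINEAGE = {
    "DeepSeek-R1-Distill-Qwen-1.5B": ("Qwen2.5-Math-1.5B", "Apache-2.0"),
    "DeepSeek-R1-Distill-Qwen-7B": ("Qwen2.5-Math-7B", "Apache-2.0"),
    "DeepSeek-R1-Distill-Llama-8B": ("Llama-3.1-8B", "Llama 3.1 Community License"),
    "DeepSeek-R1-Distill-Qwen-14B": ("Qwen2.5-14B", "Apache-2.0"),
    "DeepSeek-R1-Distill-Qwen-32B": ("Qwen2.5-32B", "Apache-2.0"),
    "DeepSeek-R1-Distill-Llama-70B": ("Llama-3.3-70B-Instruct", "Llama 3.3 Community License"),
}

def licenses_to_review(checkpoint: str) -> list[str]:
    """Return the licenses to read: DeepSeek's MIT terms plus any upstream license."""
    if checkpoint == "DeepSeek-R1":
        return ["MIT"]
    # Unknown names raise KeyError on purpose: unverified lineage should not pass silently.
    _base, upstream = DISTILL_LINEAGE[checkpoint]
    return ["MIT", upstream]
```

For example, `licenses_to_review("DeepSeek-R1-Distill-Llama-70B")` flags the Llama 3.3 license alongside MIT, while the Qwen-based distills add only Apache-2.0.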

Comparison table

| Tool | Pricing | Page type | Model source | API cost | Subscription cost | Pros | Cons |
|---|---|---|---|---|---|---|---|
| DeepSeek-R1 | Free | Model family | Own models | No required vendor API cost for local/self-hosted use. | No mandatory subscription for base model access. | MIT core licensing is commercially friendly; Strong reasoning orientation for analytical tasks | Flagship model sizes are impractical for most solo local setups; Distill licensing can vary based on upstream model lineage |
| DeepSeek-V4 | Free | Model family | Own models | No required vendor API cost for self-hosted weights; hosted inference pricing varies by provider and model variant. | No mandatory subscription for open-weight access; hosted access is typically usage-based. | 1M-token context supports large document, repo, and agent traces; Pro and Flash variants make capability-versus-cost routing easier | Even Flash is too large for ordinary local machines; Preview releases can have immature runtime support and changing provider availability |
| NVIDIA Nemotron | Free | Model family | Own models | No required vendor API cost for local/self-hosted use; hosted NIM/provider endpoints are usage-based. | No mandatory subscription for base open-model access. | Strong focus on reasoning and agentic workloads; Open model access with broad deployment flexibility | Best performance often assumes modern NVIDIA hardware; Model naming and lineup evolve quickly, requiring active tracking |
| Qwen3 8B | Free | Model family | Own models | Local: no required vendor API cost. Optional cloud API (Alibaba Cloud Model Studio, pricing page updated 2026-02-11): qwen-max starts at $0.345 input / $1.377 output per 1M tokens; qwen-plus starts at $0.115 input / $0.287 output per 1M tokens (<=128K tier). | No fixed Qwen API subscription is listed in Model Studio; API billing is pay-as-you-go by token usage. | Apache-2.0 license supports broad commercial usage; 128K context is practical for multi-document tasks | Requires local deployment and model-ops basics; Text-only core model line |
| gpt-oss-20b | Free | Model family | Own models | No required vendor API cost for local/self-hosted use. | No mandatory subscription for base model access. | Permissive Apache-2.0 license for commercial workflows; Long-context support suited to document-heavy tasks | Text-only model family; Requires self-hosting and operational monitoring |
| Ministral 3 8B | Free | Model family | Own models | No required vendor API cost for local/self-hosted use. | No mandatory subscription for base model access. | Apache-2.0 licensing is low-friction for commercial projects; Very long context window for large document sets | Long-context runs can increase memory and latency requirements; Requires self-hosting and operations discipline |
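For the optional hosted route in the Qwen3 8B row, per-token pricing turns into a bill with simple arithmetic: (tokens ÷ 1M) × price per 1M tokens, summed over input and output. The sketch below uses the qwen-plus (<=128K tier) prices quoted in the table; the workload size is hypothetical, and real bills depend on the context-length tier and current pricing.

```python
# Token-cost arithmetic for pay-as-you-go APIs: (tokens / 1M) x price per 1M tokens.
# Prices are the qwen-plus (<=128K tier) figures quoted in the comparison table;
# they change over time, so treat them as example inputs only.
QWEN_PLUS_INPUT_PER_M = 0.115   # USD per 1M input tokens
QWEN_PLUS_OUTPUT_PER_M = 0.287  # USD per 1M output tokens

def api_cost_usd(input_tokens: int, output_tokens: int,
                 input_per_m: float = QWEN_PLUS_INPUT_PER_M,
                 output_per_m: float = QWEN_PLUS_OUTPUT_PER_M) -> float:
    """Estimate the bill for one workload at per-million-token prices."""
    return ((input_tokens / 1_000_000) * input_per_m
            + (output_tokens / 1_000_000) * output_per_m)

# Hypothetical monthly workload: 2M input tokens and 500K output tokens.
monthly = api_cost_usd(2_000_000, 500_000)  # 0.23 + 0.1435 = 0.3735
print(f"qwen-plus estimate: ${monthly:.2f}")
```

For this workload, qwen-plus comes to about $0.37 for the month; passing the qwen-max prices ($0.345 / $1.377) instead gives about $1.38.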

Internal links

Related best pages

- Best Free LLMs for Solopreneurs

- Best Free AI Tools for Solopreneurs

- Best AI Automation Tools

- Best AI Email Marketing Tools