Phi-3 Mini alternatives

Lightweight Phi model family for fast local inference on modest hardware.

This Phi-3 Mini alternatives guide compares pricing, strengths, tradeoffs, and related options.

Phi-3 Mini is a practical option for solopreneurs who want responsive local inference with less memory pressure than larger models impose.

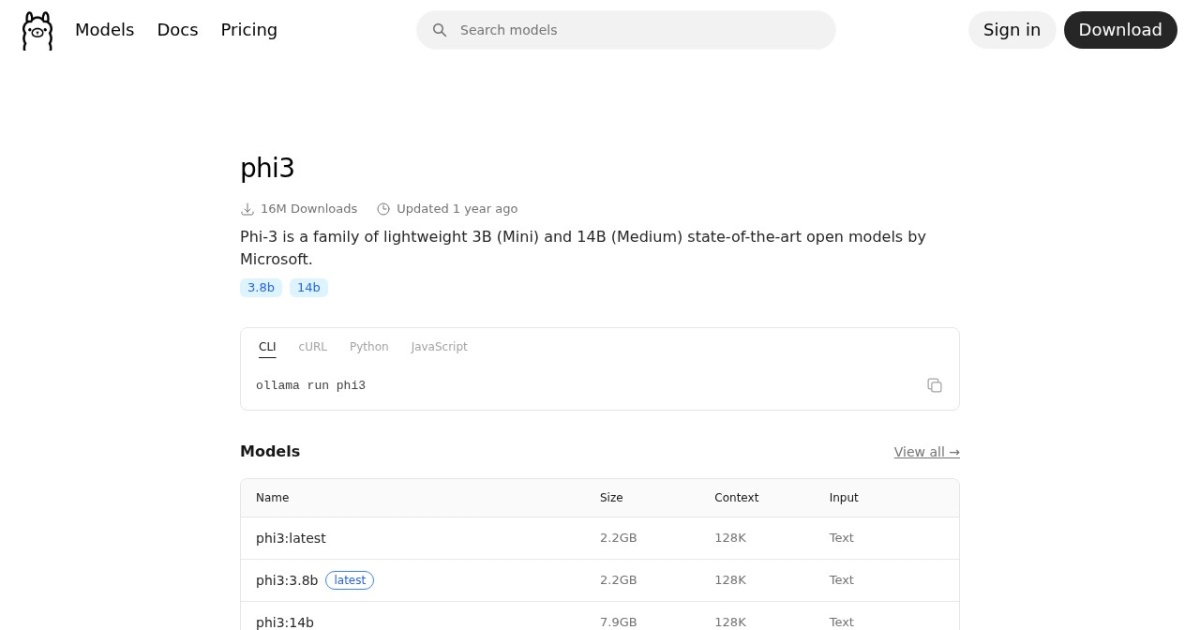

Official site: https://ollama.com/library/phi3
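Since the official listing is on the Ollama library, one way to try the model locally is through Ollama's REST API. The following is a minimal sketch, assuming an Ollama server is already running on its default port (11434) and that the `phi3` model has been pulled; the helper names `build_request` and `generate` are illustrative, not part of any library.

```python
import json
import urllib.request

# Assumption: a local Ollama server on its default port, with `phi3` already pulled.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt: str, model: str = "phi3") -> urllib.request.Request:
    """Build a non-streaming generate request for a local Ollama server."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str, model: str = "phi3", timeout: float = 120.0) -> str:
    """Send the prompt and return the model's text reply."""
    request = build_request(prompt, model)
    with urllib.request.urlopen(request, timeout=timeout) as resp:
        body = json.loads(resp.read().decode("utf-8"))
    # With "stream": False, Ollama returns one JSON object whose
    # "response" field holds the full generated text.
    return body["response"]
```

Calling `generate("Explain what a context window is in one sentence.")` should return the model's reply as a string, provided the server is reachable. Only the standard library is used, so nothing beyond Ollama itself needs installing.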

Company YouTube: No official company YouTube channel found during official-page review.

At a glance

| Pricing model | Free |
|---|---|
| Page type | Model family |
| Model source | Own models |
| API cost | No required vendor API cost for local/self-hosted use. |
| Subscription cost | No mandatory subscription for base model access. |
| Model last update | 2025-02-22 (Ollama library "Updated 1 year ago", inferred from retrieval date). |
| Parameter count | 3.8B |
| Best for | Low-latency local chat and coding help; entry-level local LLM deployments; longer-context experiments on limited hardware |
| Categories | For Solopreneurs, For Small Business, Free AI Tools, Developers, Local LLMs |
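To put the 3.8B figure in hardware terms, the sketch below estimates how much memory the weights alone would occupy at a few common storage widths. The byte widths are assumptions for illustration; real quantized files carry extra metadata, and inference also needs memory for the KV cache and activations, so treat these numbers as a lower bound.

```python
PARAMS = 3.8e9  # parameter count listed for Phi-3 Mini

# Assumed storage width per weight, in bytes. Real quantization formats
# add some overhead on top of these idealized values.
BYTES_PER_WEIGHT = {"fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(params: float, bytes_per_weight: float) -> float:
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return params * bytes_per_weight / 1e9


estimates = {
    name: weight_memory_gb(PARAMS, width)
    for name, width in BYTES_PER_WEIGHT.items()
}

for name, gb in estimates.items():
    print(f"{name}: ~{gb:.1f} GB for weights alone")
```

Under these assumptions the weights come to roughly 7.6 GB at 16-bit, 3.8 GB at 8-bit, and 1.9 GB at 4-bit, which is why a 4-bit build is the usual choice on modest hardware.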

Top alternatives

- Phi-3.5 Mini Instruct: MIT-licensed small model with long context, optimized for practical local and on-device use.

- Qwen3 8B: Apache-2.0 open-weight 8B model with 128K context, local-first deployment, and optional cloud API access.

- Gemma 2: Older Gemma family branch focused on efficient local text workloads in 2B, 9B, and 27B sizes.

Notes

Phi-3 Mini is a strong starting point for fast local inference with limited memory.

Comparison table

| Tool | Pricing | Page type | Model source | API cost | Subscription cost | Pros | Cons |
|---|---|---|---|---|---|---|---|
| Phi-3 Mini | Free | Model family | Own models | No required vendor API cost for local/self-hosted use. | No mandatory subscription for base model access. | Fast on lower-end local hardware; Lower VRAM pressure than larger model families | Lower ceiling on complex reasoning tasks; Can underperform larger models on nuanced prompts |
| Phi-3.5 Mini Instruct | Free | Model family | Own models | No required vendor API cost for local/self-hosted use. | No mandatory subscription for base model access. | MIT licensing is simple for commercial use; Small footprint compared with larger local models | Weaker on complex reasoning than larger frontier models; Text-only variant for this checkpoint |
| Qwen3 8B | Free | Model family | Own models | Local: no required vendor API cost. Optional cloud API (Alibaba Cloud Model Studio, pricing page updated 2026-02-11): qwen-max starts at $0.345 input / $1.377 output per 1M tokens; qwen-plus starts at $0.115 input / $0.287 output per 1M tokens (<=128K tier). | No fixed Qwen API subscription is listed in Model Studio; API billing is pay-as-you-go by token usage. | Apache-2.0 license supports broad commercial usage; 128K context is practical for multi-document tasks | Requires local deployment and model-ops basics; Text-only core model line |
| Gemma 2 | Free | Model family | Own models | No required vendor API cost for local/self-hosted use. | No mandatory subscription for base model access. | Efficient performance for its model sizes; Useful for budget-conscious local inference | Newer Gemma branches are stronger for multimodal or longer-context tasks; Larger variants can still pressure limited VRAM |
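The pay-as-you-go prices quoted in the Qwen3 8B row can be turned into a rough bill with simple arithmetic. The sketch below uses the starting per-1M-token prices from that row; the workload (2 million input tokens and 500,000 output tokens) is a hypothetical example, and actual billing may differ by tier, region, or later price changes.

```python
# Starting prices in USD per 1M tokens, copied from the Qwen3 8B row.
PRICES = {
    "qwen-max": {"input": 0.345, "output": 1.377},
    "qwen-plus": {"input": 0.115, "output": 0.287},
}


def api_cost_usd(model: str, input_tokens: int, output_tokens: int) -> float:
    """Estimate token-based cost in USD for one workload."""
    price = PRICES[model]
    total = input_tokens * price["input"] + output_tokens * price["output"]
    return total / 1_000_000


# Hypothetical workload: 2M input tokens and 0.5M output tokens.
for model in PRICES:
    cost = api_cost_usd(model, 2_000_000, 500_000)
    print(f"{model}: ${cost:.2f}")
```

For this assumed workload the estimate comes to about $0.37 on qwen-plus and about $1.38 on qwen-max, while local inference with any of the four models carries no per-token vendor charge.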

Related best pages

- Best Free LLMs for Solopreneurs

- Best Free AI Tools for Solopreneurs

- Best AI Automation Tools

- Best AI Email Marketing Tools