Qwen2.5 VL alternatives

Multimodal Qwen model family for local vision-language workflows.

This guide compares Qwen2.5 VL with alternative models on pricing, strengths, and tradeoffs.

Qwen2.5 VL supports local multimodal tasks such as document parsing, screenshot analysis, and image-grounded assistant workflows.

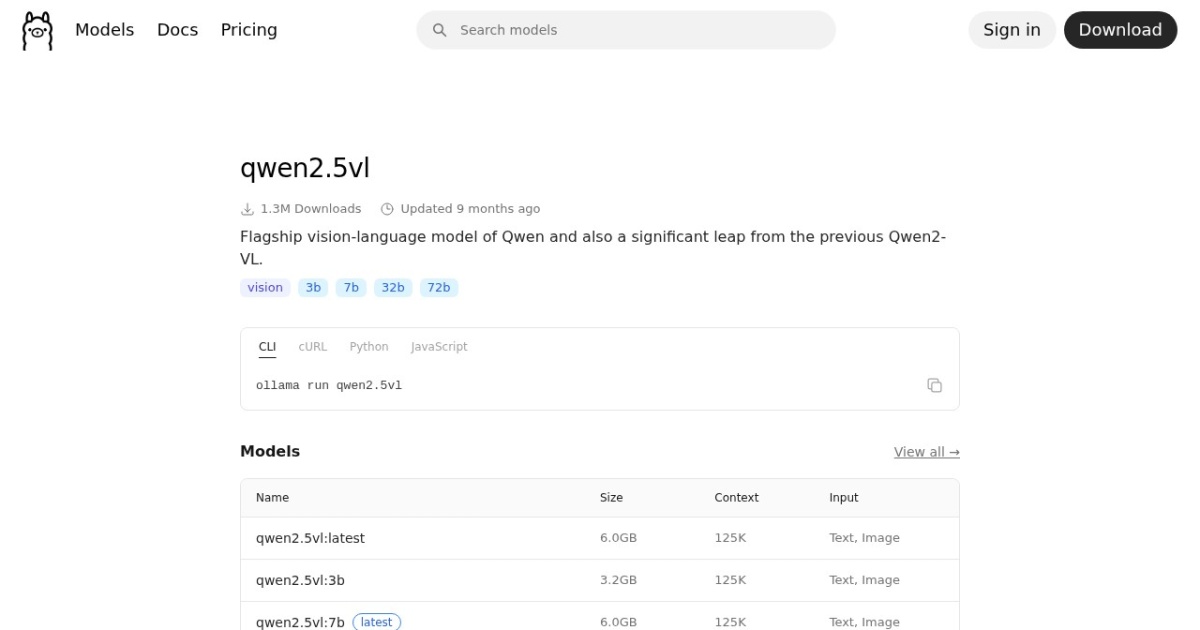

Official site: https://ollama.com/library/qwen2.5vl

Company YouTube: No official company YouTube channel found during official-page review.

At a glance

| Field | Value |
|---|---|
| Pricing model | Free |
| Page type | Model family |
| Model source | Own models |
| API cost | No required vendor API cost for local/self-hosted use. |
| Subscription cost | No mandatory subscription for base model access. |
| Model last update | 2025-05-22 (inferred from the Ollama library's "Updated 9 months ago" label and the retrieval date). |
| Model weight counts | 3B, 7B, 72B |
| Model versions | Qwen2.5-VL release, Ollama library refresh |
| Best for | Multimodal local assistant workflows; private visual document analysis; builders experimenting with vision-language tasks |
| Categories | For Solopreneurs, For Small Business, Free AI Tools, Local LLMs, Vision LLMs |

Top alternatives

- Qwen3.5: Native multimodal Qwen family with sparse MoE scaling, strong agent behavior, and a flagship 397B total / 17B active open model.

- Mistral Small 4: Open hybrid Mistral model that combines instruct, reasoning, coding, OCR, and transcription in one 256K-context family.

- Llama 3.2 Vision: Vision-capable Llama model for local image-plus-text understanding tasks.

- Phi-3.5 Vision Instruct: Compact MIT-licensed multimodal model for local image, OCR, chart, and multi-image reasoning tasks.

- MiniCPM-V 2.6: Efficient local VLM with strong OCR, multi-image, and video understanding in an 8B-class footprint.

- InternVL 3.5: Apache-2.0 multimodal family with many size options and a strong focus on reasoning, OCR, and agent-style visual tasks.

- DeepSeek-VL2: Mixture-of-experts local vision-language family for OCR, documents, charts, and grounded multimodal reasoning.

- ChatGPT: Cloud LLM with a free tier for writing, research, and file-based analysis.

- Gemini: Cloud LLM with a free tier, published daily prompt limits, and research-focused workflows.

Notes

Qwen2.5 VL is a strong local multimodal option for private image-and-text workflows.
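
As a rough illustration of such a local image-and-text workflow, the sketch below sends one image and a prompt to an Ollama server running on the same machine. It assumes Ollama's default local endpoint (`http://localhost:11434/api/generate`) and uses the model name `qwen2.5vl` taken from the library URL above; a size-specific tag may be needed depending on what has been pulled. The function names and the example file name are hypothetical.

```python
import base64
import json
import urllib.request

# Assumption: Ollama is running locally on its default port.
OLLAMA_URL = "http://localhost:11434/api/generate"
# Assumption: model name taken from the library URL; adjust to the tag you pulled.
MODEL = "qwen2.5vl"


def build_payload(image_bytes: bytes, prompt: str, model: str = MODEL) -> dict:
    """Build a non-streaming generate request carrying one base64-encoded image."""
    return {
        "model": model,
        "prompt": prompt,
        "images": [base64.b64encode(image_bytes).decode("ascii")],
        "stream": False,
    }


def describe_image(path: str, prompt: str) -> str:
    """Send the image to the local server and return the model's reply text."""
    with open(path, "rb") as f:
        payload = build_payload(f.read(), prompt)
    request = urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read())["response"]
```

With the server running and the model pulled, a call such as `describe_image("invoice.png", "List the line items in this document.")` would return the model's answer as a string. The image never leaves the machine, which is the point of the private, local setup described above.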

Comparison table

| Tool | Pricing | Page type | Model source | API cost | Subscription cost | Pros | Cons |
|---|---|---|---|---|---|---|---|
| Qwen2.5 VL | Free | Model family | Own models | No required vendor API cost for local/self-hosted use. | No mandatory subscription for base model access. | Strong local multimodal capability set; Useful for document and visual analysis workflows | Heavier runtime needs than text-only models; Requires careful context and memory tuning |
| Qwen3.5 | Free | Model family | Own models | No required vendor API cost for local/self-hosted use; hosted Qwen3.5-Plus access is usage-based in Model Studio. | No mandatory subscription for open-weight access. | Native multimodal design is stronger than many stitched vision-plus-text stacks; Sparse MoE design keeps active parameters much lower than total scale | The flagship open model is still far heavier than commodity-laptop local models; Newer runtime support may lag behind more established Qwen branches |
| Mistral Small 4 | Free | Model family | Own models | Mistral API lists Mistral Small 4 at $0.15 input / $0.60 output per 1M tokens. | No mandatory subscription for open-weight access; hosted API is pay-as-you-go. | One family covers reasoning, coding, OCR, and transcription; 256K context is practical for large document and repo workflows | Still much heavier than 7B to 14B local models; Fresh releases can have uneven runtime support at first |
| Llama 3.2 Vision | Free | Model family | Own models | No required vendor API cost for local/self-hosted use. | No mandatory subscription for base model access. | Adds local image understanding to text workflows; Good fit for multimodal assistant prototypes | Vision workloads can be heavier than text-only runs; Requires careful tuning for stable latency |
| Phi-3.5 Vision Instruct | Free | Model family | Own models | No required vendor API cost for local/self-hosted use. | No mandatory subscription for base model access. | MIT licensing is simple for commercial use; Strong fit for OCR, chart, and table understanding | Still needs careful VRAM tuning for heavier image batches; Weaker ceiling than larger frontier-scale VLMs |
| MiniCPM-V 2.6 | Free | Model family | Own models | No required vendor API cost for local/self-hosted use. | No mandatory subscription for base model access. | Strong OCR and document understanding for its size; Supports multi-image and video workflows | Weight license is less straightforward than MIT or Apache checkpoints; Setup is more technical than hosted VLM tools |
| InternVL 3.5 | Free | Model family | Own models | No required vendor API cost for local/self-hosted use. | No mandatory subscription for base model access. | Broad model-size ladder for different hardware budgets; Strong multimodal reasoning and OCR direction | Best checkpoints are heavier than small local VLMs; Setup and inference tuning can be demanding |
| DeepSeek-VL2 | Free | Model family | Own models | No required vendor API cost for local/self-hosted use. | No mandatory subscription for base model access. | Strong focus on OCR, tables, charts, and document tasks; Multiple size options improve deployment flexibility | Custom weight license is less simple than MIT or Apache model families; Local setup is heavier than browser-based assistants |
| ChatGPT | Freemium | Model family | Own models | OpenAI API (text): GPT-5.2 is $1.75 input / $14 output per 1M tokens; GPT-5.2 mini is $0.25 input / $2 output per 1M tokens. | ChatGPT Plus is $20/month; ChatGPT Pro is $200/month. | Broad free-tier capabilities for drafting, planning, and general analysis; Built-in web search plus file and image uploads | Usage caps are variable rather than a fixed public quota; Consumer content can be used for model improvement unless you opt out |
| Gemini | Freemium | Model family | Own models | Gemini API (2.5 Pro): $1.25 input / $10 output per 1M tokens for prompts <=200K tokens; $2.50 input / $15 output per 1M tokens for prompts >200K. | Google AI Pro (Gemini app) is $19.99/month; Google AI Ultra is $249.99/month (US pricing). | Published free-tier limit guidance helps planning; Good fit for research-heavy and structured planning workflows | Limits can change without fixed long-term guarantees; Privacy handling includes review pathways that may not fit sensitive work |
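
The hosted API prices in the table are quoted per 1M tokens, so comparing them against a free local model takes a small calculation. The sketch below shows that arithmetic using only the rates listed in the table; the function names and the token volumes in the usage note are illustrative assumptions, and the prices themselves may have changed since this page was compiled.

```python
def token_cost(input_tokens, output_tokens, input_price, output_price):
    """Return the dollar cost for a workload, given prices quoted per 1M tokens."""
    return (input_tokens * input_price + output_tokens * output_price) / 1_000_000


def gemini_25_pro_cost(prompt_tokens, output_tokens):
    """Apply the two-tier Gemini 2.5 Pro rates from the table to a single request.

    The tier is chosen by prompt length: up to 200K tokens uses the lower rates,
    anything longer uses the higher rates.
    """
    if prompt_tokens <= 200_000:
        return token_cost(prompt_tokens, output_tokens, 1.25, 10.00)
    return token_cost(prompt_tokens, output_tokens, 2.50, 15.00)


if __name__ == "__main__":
    # Hypothetical workload: 2M input tokens and 500K output tokens.
    mistral = token_cost(2_000_000, 500_000, 0.15, 0.60)
    print(f"Mistral Small 4: ${mistral:.2f}")

    # Hypothetical single request: 100K-token prompt, 10K-token reply.
    print(f"Gemini 2.5 Pro: ${gemini_25_pro_cost(100_000, 10_000):.3f}")
```

For example, 2M input tokens and 500K output tokens at the listed Mistral Small 4 rates come to 0.30 + 0.30 = 0.60 dollars. The local models in the table carry no vendor API cost, so their running cost is hardware and electricity, which this sketch does not attempt to model.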

Related best pages

- Best Free LLMs for Solopreneurs

- Best Free AI Tools for Solopreneurs

- Best AI Automation Tools

- Best AI Email Marketing Tools