MiniCPM-V 2.6 alternatives

Efficient local VLM with strong OCR, multi-image, and video understanding in an 8B-class footprint.

This guide compares MiniCPM-V 2.6 with alternative local vision-language models on pricing, strengths, and tradeoffs.

MiniCPM-V 2.6 is one of the strongest practical local vision models for OCR-heavy and document-heavy tasks. It stands out for high-resolution image input, efficient visual token usage, and several workable local deployment paths: Ollama, llama.cpp, vLLM, and quantized builds.
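As a rough sizing aid for the quantized deployment paths, weight memory scales with parameter count times bits per weight. The sketch below assumes roughly 8 billion parameters (the figure listed for this model) and idealized 16-, 8-, and 4-bit precisions. It counts weights only, so KV cache, image tokens, and runtime overhead come on top, and real quantized formats (GGUF, int4) carry some extra per-block overhead.

```python
# Rough weight-only memory estimate for an ~8B-parameter model.
# Assumption: ignores KV cache, activations, image tokens, and runtime
# overhead, so actual memory use will be higher than these figures.

PARAMS = 8e9  # approximate parameter count, taken from the "8B" figure

# Idealized bits per weight; real quantized formats add per-block scales.
PRECISIONS = {"fp16/bf16": 16, "int8": 8, "int4": 4}


def weight_memory_gb(params: float, bits_per_weight: float) -> float:
    """Return weight storage in gigabytes (10**9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


if __name__ == "__main__":
    for name, bits in PRECISIONS.items():
        print(f"{name:>9}: ~{weight_memory_gb(PARAMS, bits):.1f} GB of weights")
```

Running it prints about 16 GB for 16-bit weights, 8 GB for 8-bit, and 4 GB for 4-bit, which is why quantized builds matter when fitting the model onto consumer GPUs.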

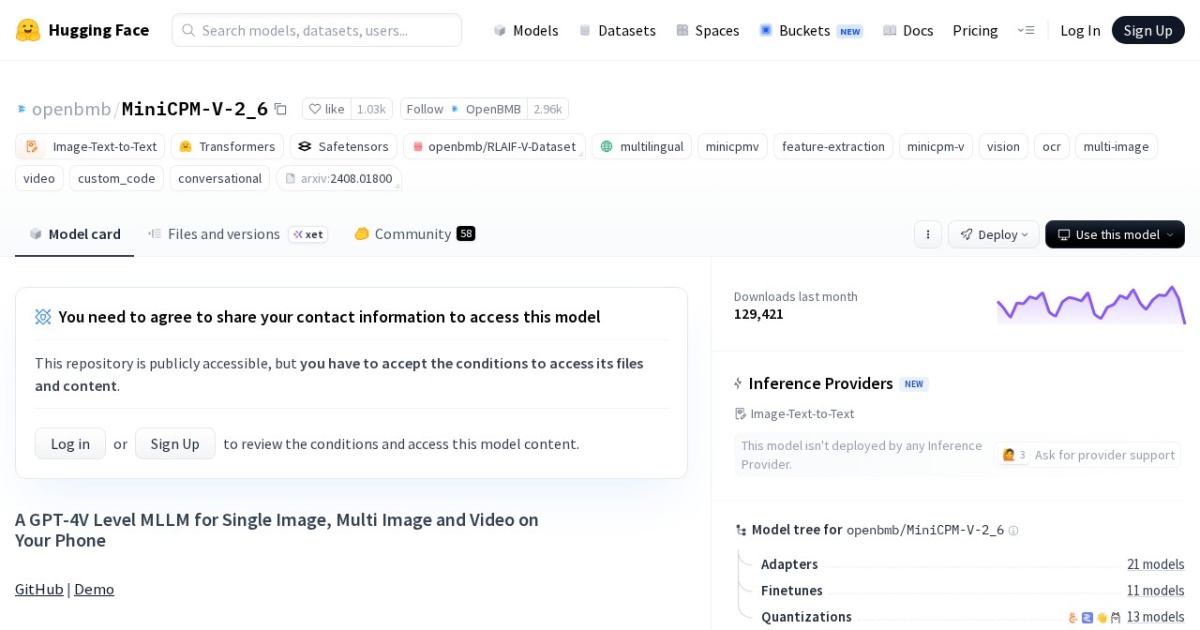

Official site: https://huggingface.co/openbmb/MiniCPM-V-2_6

Company YouTube: No official company YouTube channel was found on the official pages.

At a glance

| Field | Details |
|---|---|
| Pricing model | Free |
| Page type | Model family |
| Model source | Own models |
| API cost | No required vendor API cost for local/self-hosted use. |
| Subscription cost | No mandatory subscription for base model access. |
| Model last update | 2024-08-03 (MiniCPM-V paper publication and Hugging Face release period). |
| Parameter count | 8B |
| Model versions | MiniCPM-V 2.6 (MiniCPM-o 2.6 also announced) |
| Best for | Private visual document analysis, multimodal local assistant workflows, privacy-sensitive visual assistant tasks |
| Categories | For Solopreneurs, For Small Business, Free AI Tools, Developers, Local LLMs, Vision LLMs |


Top alternatives

- Qwen2.5 VL: Multimodal Qwen model family for local vision-language workflows.

- Phi-3.5 Vision Instruct: Compact MIT-licensed multimodal model for local image, OCR, chart, and multi-image reasoning tasks.

- InternVL 3.5: Apache-2.0 multimodal family with many size options and a strong focus on reasoning, OCR, and agent-style visual tasks.

- DeepSeek-VL2: Mixture-of-experts local vision-language family for OCR, documents, charts, and grounded multimodal reasoning.

Notes

MiniCPM-V 2.6 is especially relevant if your local vision workloads center on OCR, screenshots, PDFs, and complex multi-image comparisons.
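For the multi-image comparisons mentioned above, one local route is the Ollama deployment path. The sketch below builds and sends a non-streaming request to Ollama's `/api/chat` endpoint with several base64-encoded images in a single user message. Assumptions: Ollama is running on its default port (11434), the model has been pulled under the tag `minicpm-v`, and your installed Ollama version passes multiple images to this model. Verify all three against your setup.

```python
import base64
import json
import urllib.request

# Assumptions: local Ollama server on the default port, and a MiniCPM-V
# build pulled under the tag "minicpm-v". Adjust both for your setup.
OLLAMA_URL = "http://localhost:11434/api/chat"
MODEL_TAG = "minicpm-v"


def build_multi_image_request(images: list[bytes], prompt: str,
                              model: str = MODEL_TAG) -> dict:
    """Build a non-streaming /api/chat payload with several images in one user turn."""
    if not images:
        raise ValueError("at least one image is required")
    return {
        "model": model,
        "stream": False,
        "messages": [
            {
                "role": "user",
                "content": prompt,
                # Ollama expects images as base64-encoded strings.
                "images": [base64.b64encode(img).decode("ascii") for img in images],
            }
        ],
    }


def send(payload: dict, url: str = OLLAMA_URL) -> str:
    """POST the payload to Ollama and return the assistant's reply text."""
    req = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(req) as resp:
        body = json.load(resp)
    # Non-streaming chat responses carry the reply under message.content.
    return body["message"]["content"]
```

To compare two screenshots or PDF page renders, pass the raw bytes of each file (for example, read with `open(path, "rb").read()`) together with a prompt such as "List every field that differs between these two pages", then call `send()` on the resulting payload.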

Comparison table

| Tool | Pricing | Page type | Model source | API cost | Subscription cost | Pros | Cons |
|---|---|---|---|---|---|---|---|
| MiniCPM-V 2.6 | Free | Model family | Own models | No required vendor API cost for local/self-hosted use. | No mandatory subscription for base model access. | Strong OCR and document understanding for its size; Supports multi-image and video workflows | Weight license is less straightforward than MIT or Apache checkpoints; Setup is more technical than hosted VLM tools |
| Qwen2.5 VL | Free | Model family | Own models | No required vendor API cost for local/self-hosted use. | No mandatory subscription for base model access. | Strong local multimodal capability set; Useful for document and visual analysis workflows | Heavier runtime needs than text-only models; Requires careful context and memory tuning |
| Phi-3.5 Vision Instruct | Free | Model family | Own models | No required vendor API cost for local/self-hosted use. | No mandatory subscription for base model access. | MIT licensing is simple for commercial use; Strong fit for OCR, chart, and table understanding | Still needs careful VRAM tuning for heavier image batches; Weaker ceiling than larger frontier-scale VLMs |
| InternVL 3.5 | Free | Model family | Own models | No required vendor API cost for local/self-hosted use. | No mandatory subscription for base model access. | Broad model-size ladder for different hardware budgets; Strong multimodal reasoning and OCR direction | Best checkpoints are heavier than small local VLMs; Setup and inference tuning can be demanding |
| DeepSeek-VL2 | Free | Model family | Own models | No required vendor API cost for local/self-hosted use. | No mandatory subscription for base model access. | Strong focus on OCR, tables, charts, and document tasks; Multiple size options improve deployment flexibility | Custom weight license is less simple than MIT or Apache model families; Local setup is heavier than browser-based assistants |
