Kimi K alternatives

Earlier open-weight Kimi branch for long-context reasoning and local LLM experimentation.

This Kimi K alternatives guide compares pricing, strengths, tradeoffs, and related options.

Here, Kimi K refers to the older open-weight K2 branch, released before Moonshot AI expanded the line with Kimi K2.6, a newer multimodal release with deeper agentic capabilities.

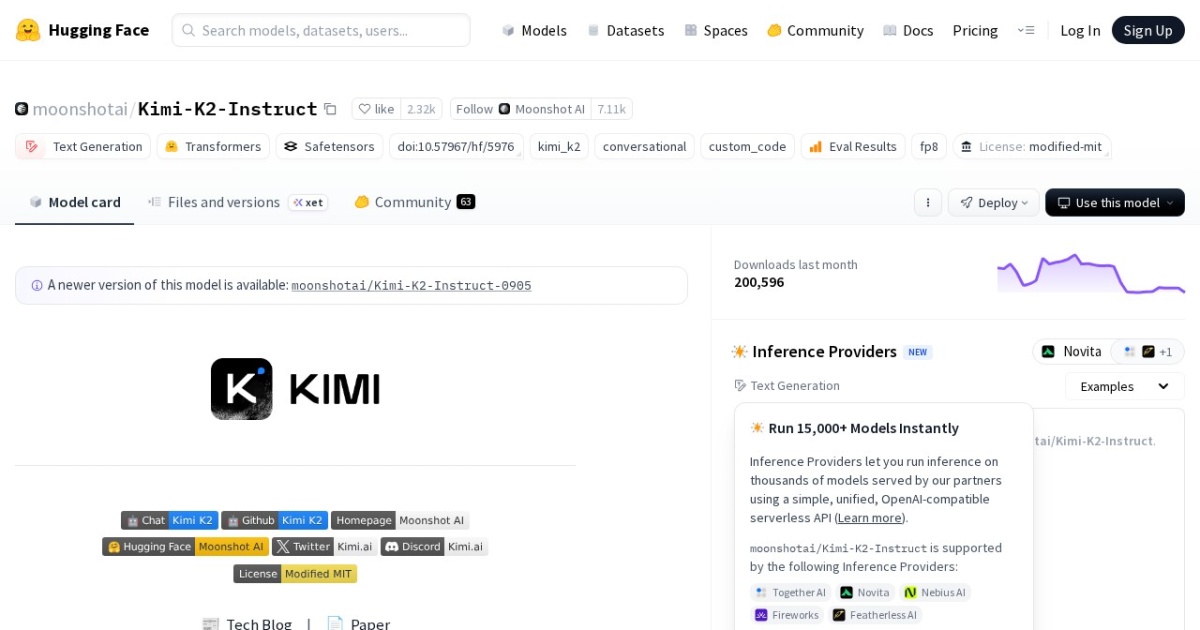

Official site: https://huggingface.co/moonshotai/Kimi-K2-Instruct

Company YouTube: No official company YouTube channel was found during a review of the official pages.

At a glance

| Attribute | Details |
|---|---|
| Pricing model | Free |
| Page type | Model family |
| Model source | Own models |
| API cost | No required vendor API cost for local/self-hosted use. |
| Subscription cost | No mandatory subscription for base model access. |
| Model last update | 2026-01-30 (Hugging Face API lastModified). |
| Model weight counts | 1T total / 32B active parameters (mixture-of-experts) |
| Related model | Kimi K2.6 · Kimi K vs Kimi K2.6 |
| Key difference | Kimi K is the older text-first K2 branch; Kimi K2.6 is newer, multimodal, and much more focused on long-horizon coding and agent workflows. |
| Best for | Local long-context drafting and analysis; builders comparing open-weight LLM stacks; privacy-sensitive solopreneur research workflows |
| Categories | For Solopreneurs, For Small Business, Free AI Tools, Local LLMs |
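The "1T total / 32B active" figure matters most when self-hosting. In a mixture-of-experts model only a subset of parameters runs for each token, but the full set of weights generally still has to be loaded. The Python sketch below is a back-of-the-envelope estimate, assuming memory scales as parameter count × bytes per parameter. It ignores the KV cache, activations, and serving-framework overhead, and the precision list is illustrative, not a statement about which checkpoint formats Moonshot AI publishes.

```python
# Back-of-the-envelope weight-memory estimate for a mixture-of-experts model.
# Assumption: memory ~= parameter count x bytes per parameter. KV cache,
# activations, and serving overhead are ignored, so real needs are higher.

TOTAL_PARAMS = 1_000_000_000_000  # 1T total parameters (from the table)
ACTIVE_PARAMS = 32_000_000_000    # 32B parameters active per token

# Illustrative bytes per parameter for common storage precisions.
BYTES_PER_PARAM = {"bf16": 2.0, "fp8": 1.0, "int4": 0.5}


def weight_gb(params: int, precision: str) -> float:
    """Return approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return params * BYTES_PER_PARAM[precision] / 1e9


for precision in BYTES_PER_PARAM:
    total = weight_gb(TOTAL_PARAMS, precision)    # must be resident in memory
    active = weight_gb(ACTIVE_PARAMS, precision)  # touched per token
    print(f"{precision}: all weights ~{total:,.0f} GB, active per token ~{active:,.0f} GB")
```

The gap between the two numbers is the practical tradeoff: per-token compute tracks the 32B active parameters, while hardware memory planning has to start from the 1T total.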

Top alternatives

- Kimi K2.6 : Latest open-weight Kimi model for long-horizon coding, agent swarms, multimodal execution, and large-context local experimentation.

- Qwen3 8B : Apache-2.0 open-weight 8B model with 128K context (32K native, extended via YaRN), local-first deployment, and optional cloud API access.

- GLM-4.5 Air : Open-weight GLM model variant for local reasoning, coding, and automation workflows.

- DeepSeek-R1 : Reasoning-focused open-weight family with MIT core licensing and smaller distilled options.

Notes

Kimi K is now mainly useful as a reference point for the older K2 branch; for the current Kimi release covered on this site, see Kimi K2.6.

Comparison table

| Tool | Pricing | Page type | Model source | API cost | Subscription cost | Pros | Cons |
|---|---|---|---|---|---|---|---|
| Kimi K | Free | Model family | Own models | No required vendor API cost for local/self-hosted use. | No mandatory subscription for base model access. | Good fit for private long-context local workflows; Open-weight path enables deeper customization | Requires technical setup for serving and monitoring; Quality varies by deployment tuning and prompt discipline |
| Kimi K2.6 | Free | Model family | Own models | No required vendor API cost for local/self-hosted use; Moonshot also offers hosted API access if you prefer managed deployment. | No mandatory subscription for base model access. | Stronger long-horizon coding and agentic execution than the older Kimi K branch; Native multimodal support for screenshots, UI work, and visually grounded tasks | Very large model family with demanding deployment requirements; Commercial use still needs license and policy review |
| Qwen3 8B | Free | Model family | Own models | Local: no required vendor API cost. Optional cloud API (Alibaba Cloud Model Studio, pricing page updated 2026-02-11): qwen-max starts at $0.345 input / $1.377 output per 1M tokens; qwen-plus starts at $0.115 input / $0.287 output per 1M tokens (<=128K tier). | No fixed Qwen API subscription is listed in Model Studio; API billing is pay-as-you-go by token usage. | Apache-2.0 license supports broad commercial usage; 128K context is practical for multi-document tasks | Requires local deployment and model-ops basics; Text-only core model line |
| GLM-4.5 Air | Free | Model family | Own models | No required vendor API cost for local/self-hosted use. | No mandatory subscription for base model access. | Strong fit for local-first and private LLM workflows; Useful balance of capability and deployment practicality | Requires local serving and model operations setup; Output quality depends on prompt design and QA discipline |
| DeepSeek-R1 | Free | Model family | Own models | No required vendor API cost for local/self-hosted use. | No mandatory subscription for base model access. | MIT core licensing is commercially friendly; Strong reasoning orientation for analytical tasks | Flagship model sizes are impractical for most solo local setups; Distill licensing can vary based on upstream model lineage |
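For the optional Qwen cloud API, pay-as-you-go cost is input tokens × input rate plus output tokens × output rate, with rates quoted per 1M tokens. The Python sketch below uses the starting rates listed in the comparison table (Model Studio pricing page updated 2026-02-11). It assumes those rates still apply and that the workload stays in the lowest tier; higher context tiers cost more. The monthly workload figures are hypothetical.

```python
# Estimate pay-as-you-go Qwen API cost from token counts.
# Rates (USD per 1M tokens) are the starting prices quoted in the comparison
# table; they can change and higher context tiers cost more, so re-check
# the Model Studio pricing page before budgeting.

PRICES_PER_M = {
    "qwen-max": {"input": 0.345, "output": 1.377},
    "qwen-plus": {"input": 0.115, "output": 0.287},  # <=128K tier
}


def estimate_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the estimated USD cost for a given token volume."""
    rates = PRICES_PER_M[model]
    return (input_tokens * rates["input"] + output_tokens * rates["output"]) / 1_000_000


# Hypothetical monthly workload: 20M input tokens, 5M output tokens.
for model in PRICES_PER_M:
    print(f"{model}: ${estimate_cost(model, 20_000_000, 5_000_000):.2f}")
```

For qwen-max, output tokens cost roughly four times as much as input tokens at these rates, so long generations move the total more than long prompts do.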

Internal links

Related best pages

- Best Free LLMs for Solopreneurs

- Best Free AI Tools for Solopreneurs

- Best AI Automation Tools

- Best AI Email Marketing Tools