Gemma 2 alternatives

Older Gemma family branch focused on efficient local text workloads in 2B, 9B, and 27B sizes.

This Gemma 2 alternatives guide compares pricing, strengths, tradeoffs, and related options.

Gemma 2 remains a practical local text model family when you want solid quality in smaller footprints, but it is now clearly the older branch in the Gemma line. Gemma 3 added multimodal support and longer context, while newer Gemma 3n and Gemma 4 branches push the family further toward on-device multimodal use.

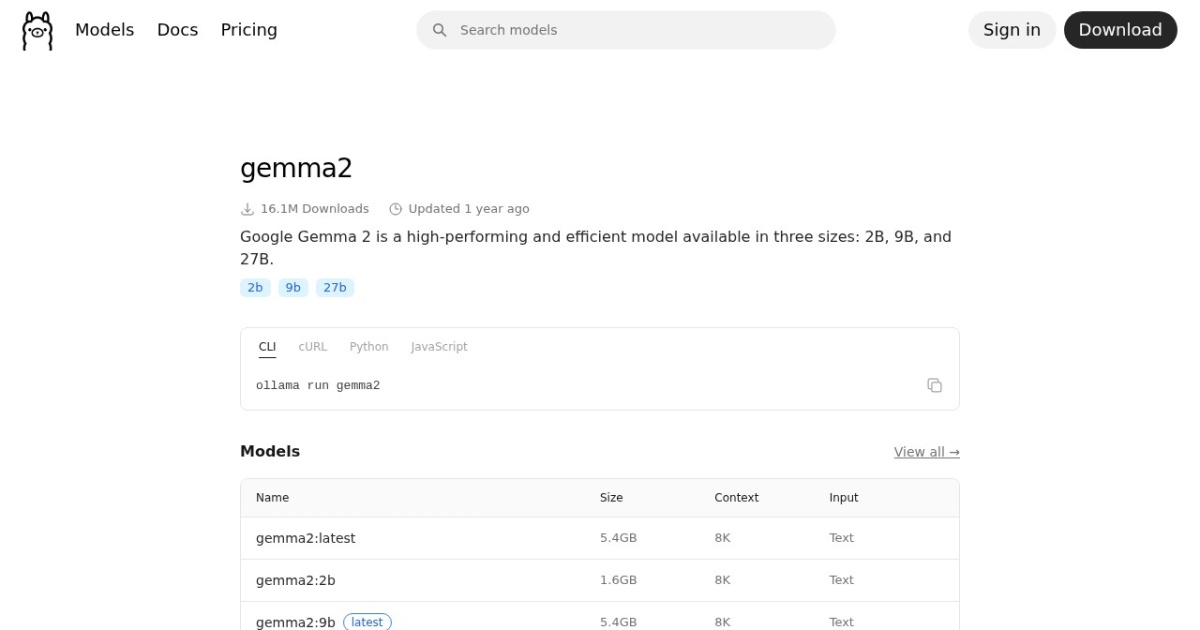

Official site: https://ollama.com/library/gemma2

Company YouTube: No official company YouTube channel found during official-page review.

At a glance

| Attribute | Details |
|---|---|
| Pricing model | Free |
| Page type | Model family |
| Model source | Own models |
| API cost | No required vendor API cost for local/self-hosted use. |
| Subscription cost | No mandatory subscription for base model access. |
| Model last update | 2024-10-02 (Google Gemma releases list: Gemma 2 JPN and Gemma Scope update). |
| Model sizes (parameters) | 2B, 9B, 27B |
| Model versions | Gemma 2 launch, Gemma 2 2B, Gemma 2 JPN and Gemma Scope, Gemma 3 announced |
| Related model | Gemma 3 · Gemma 2 vs Gemma 3 |
| Key difference | Gemma 2 is an older text-first branch; Gemma 3 adds multimodal support, larger context, and a broader current ecosystem. |
| Best for | Efficient local chat workloads; summarization and long-form drafting; solopreneurs optimizing for memory efficiency |
| Categories | For Solopreneurs, For Small Business, Free AI Tools, Developers, Local LLMs |

Model version timeline

A smaller 2B Gemma 2 checkpoint expanded the family for lighter local deployments.

Google added a Japanese Gemma 2 branch and Gemma Scope tooling around the family.

Gemma 3 introduced multimodal support and made Gemma 2 the older generation for most new evaluations.

Top alternatives

- Gemma 3: Multimodal Gemma family with 128K context and broad local deployment options under Gemma terms.

- Llama 3.1: Open model family often used as a balanced local default for general chat, writing, and coding.

- Qwen2.5: Versatile multilingual open model family with strong long-form writing and instruction-following behavior.

- Qwen3 8B: Apache-2.0 open-weight 8B model with 128K context, local-first deployment, and optional cloud API access.

- Phi-3.5 Mini Instruct: MIT-licensed small model with long context, optimized for practical local and on-device use.

Notes

Gemma 2 is still useful on tighter hardware, but it should now be evaluated as the older Gemma branch rather than the default current choice.
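
Whether a given Gemma 2 size fits on tighter hardware depends mostly on how much memory its weights need. A common rule of thumb, used here as an assumption rather than a figure published by Google, is parameter count × bits per weight ÷ 8. The sketch below applies it to the nominal 2B, 9B, and 27B labels; the names `NOMINAL_SIZES_B` and `weight_memory_gb` are hypothetical. Real checkpoints differ somewhat from the round labels, quantized formats carry some extra bits per weight, and the KV cache for long prompts adds memory on top, so treat the results as a lower bound.

```python
# Back-of-envelope weight memory for dense models.
# Assumption: memory ≈ params × bits_per_weight / 8. This ignores the KV cache,
# activations, and runtime overhead, so results are a lower bound.

# Nominal sizes from the family's labels, in billions of parameters
# (hypothetical mapping; actual checkpoint counts differ somewhat).
NOMINAL_SIZES_B = {"Gemma 2 2B": 2, "Gemma 2 9B": 9, "Gemma 2 27B": 27}


def weight_memory_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight-only memory in GB (1 GB = 10**9 bytes)."""
    # (params_billion * 1e9 params) * bits / 8 bytes, divided by 1e9 bytes/GB:
    # the two factors of 1e9 cancel.
    return params_billion * bits_per_weight / 8


if __name__ == "__main__":
    for name, size_b in NOMINAL_SIZES_B.items():
        fp16 = weight_memory_gb(size_b, 16)
        q4 = weight_memory_gb(size_b, 4)
        print(f"{name}: ~{fp16:.1f} GB at 16-bit, ~{q4:.1f} GB at 4-bit")
```

Under these assumptions the 27B variant needs roughly 13.5 GB for 4-bit weights alone, which is why it can still pressure limited VRAM, while the 2B variant needs about 1 GB.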

Comparison table

| Tool | Pricing | Page type | Model source | API cost | Subscription cost | Pros | Cons |
|---|---|---|---|---|---|---|---|
| Gemma 2 | Free | Model family | Own models | No required vendor API cost for local/self-hosted use. | No mandatory subscription for base model access. | Efficient performance for its model sizes; Useful for budget-conscious local inference | Newer Gemma branches are stronger for multimodal or longer-context tasks; Larger variants can still pressure limited VRAM |
| Gemma 3 | Free | Model family | Own models | No required vendor API cost for local/self-hosted use. | No mandatory subscription for base model access. | Multiple model sizes support broad hardware profiles; Long-context support for substantial document tasks | No longer the newest Gemma branch for fresh evaluations; Custom license terms increase compliance workload |
| Llama 3.1 | Free | Model family | Own models | No required vendor API cost for local/self-hosted use. | No mandatory subscription for base model access. | Strong quality-to-size balance for local usage; Works well across general assistant tasks | Larger variants need substantial VRAM; Output quality still varies by quant and prompt quality |
| Qwen2.5 | Free | Model family | Own models | No required vendor API cost for local/self-hosted use. | No mandatory subscription for base model access. | Strong multilingual quality across tasks; Scales from smaller to larger local deployments | Larger sizes need significant VRAM headroom; Runtime context still requires careful tuning |
| Qwen3 8B | Free | Model family | Own models | Local: no required vendor API cost. Optional cloud API (Alibaba Cloud Model Studio, pricing page updated 2026-02-11): qwen-max starts at $0.345 input / $1.377 output per 1M tokens; qwen-plus starts at $0.115 input / $0.287 output per 1M tokens (<=128K tier). | No fixed Qwen API subscription is listed in Model Studio; API billing is pay-as-you-go by token usage. | Apache-2.0 license supports broad commercial usage; 128K context is practical for multi-document tasks | Requires local deployment and model-ops basics; Text-only core model line |
| Phi-3.5 Mini Instruct | Free | Model family | Own models | No required vendor API cost for local/self-hosted use. | No mandatory subscription for base model access. | MIT licensing is simple for commercial use; Small footprint compared with larger local models | Weaker on complex reasoning than larger frontier models; Text-only variant for this checkpoint |
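
The Qwen3 8B row is the only one with per-token cloud prices, so the pay-as-you-go arithmetic is worth making explicit: cost = (input tokens × input price + output tokens × output price) ÷ 1,000,000. The sketch below is a minimal illustration that assumes only the starting (<=128K tier) list prices quoted in that row for the hosted qwen-max and qwen-plus models, with no higher tiers, discounts, or caching; `estimate_cost_usd` is a hypothetical helper, not part of any Alibaba Cloud SDK.

```python
# Starting-tier list prices in USD per 1M tokens, copied from the Qwen3 8B row
# (Alibaba Cloud Model Studio, <=128K tier). Assumption: no other tiers,
# discounts, or caching apply. Prices change; re-check the pricing page.
PRICES_PER_1M_USD = {
    "qwen-max": (0.345, 1.377),   # (input, output)
    "qwen-plus": (0.115, 0.287),  # (input, output)
}


def estimate_cost_usd(model: str, input_tokens: int, output_tokens: int) -> float:
    """Hypothetical helper: pay-as-you-go cost of one workload at list prices."""
    price_in, price_out = PRICES_PER_1M_USD[model]
    return (input_tokens * price_in + output_tokens * price_out) / 1_000_000


if __name__ == "__main__":
    # Example workload: 2M input tokens and 500K output tokens.
    for model in PRICES_PER_1M_USD:
        cost = estimate_cost_usd(model, 2_000_000, 500_000)
        print(f"{model}: ${cost:.2f}")
```

For that example workload, qwen-plus comes to about $0.37 and qwen-max to about $1.38, compared with no required vendor API cost when the open weights run locally.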

Related best pages

- Best Free LLMs for Solopreneurs

- Best Free AI Tools for Solopreneurs

- Best AI Automation Tools

- Best AI Email Marketing Tools