Mistral NeMo alternatives

Mid-size model line that balances general reasoning, coding support, and local deployability.

This guide compares Mistral NeMo with alternative models on pricing, strengths, and tradeoffs.

Mistral NeMo is a useful middle-ground choice when you need better output quality than small models offer without the VRAM demands of very large ones.
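To make the VRAM point concrete, here is a rough back-of-the-envelope sketch of how much memory the weights alone need at different precisions. It assumes exactly 12 billion parameters and nominal bit widths; the function name `weight_memory_gib` is hypothetical, and real quantized files typically use slightly more bits per weight, with extra memory for the KV cache and runtime on top.

```python
def weight_memory_gib(params_billion: float, bits_per_weight: float) -> float:
    """Approximate memory for model weights only, in GiB.

    Assumption: ignores KV cache, activations, and runtime overhead,
    so actual usage will be higher than this figure.
    """
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 2**30


# Compare a 12B model with a 7B-class model at common nominal precisions.
for label, bits in [("16-bit", 16), ("8-bit", 8), ("4-bit", 4)]:
    nemo = weight_memory_gib(12, bits)
    small = weight_memory_gib(7, bits)
    print(f"{label}: 12B ~ {nemo:.1f} GiB, 7B ~ {small:.1f} GiB")
```

Treat the numbers as a lower bound for planning purposes rather than a measurement of any particular runtime.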

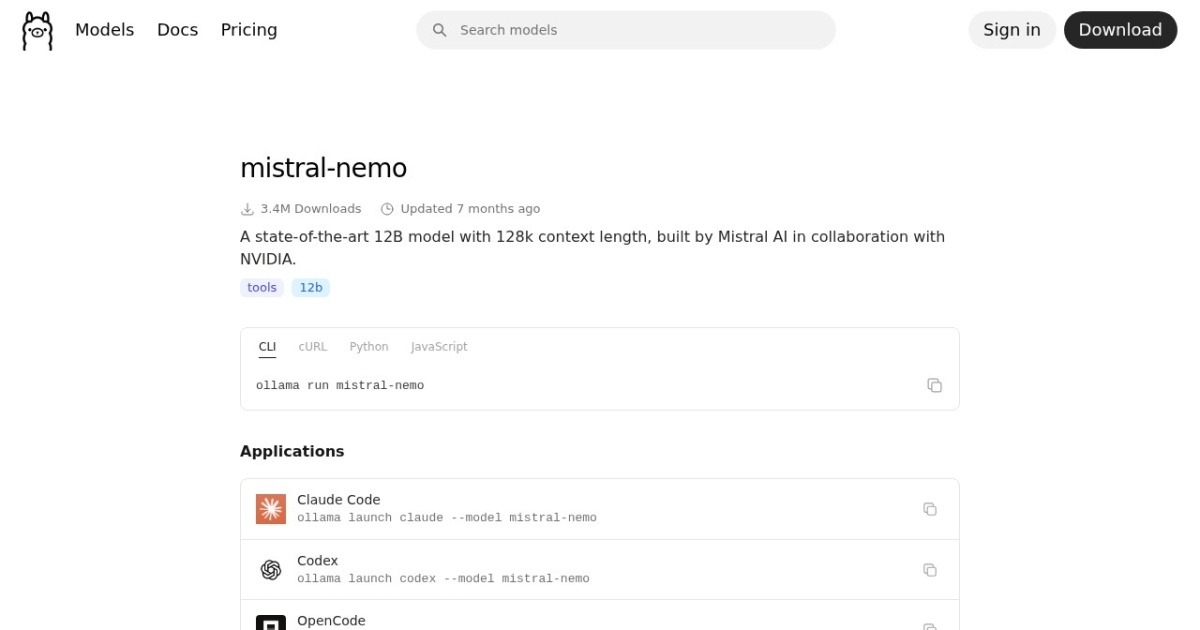

Official site: https://ollama.com/library/mistral-nemo

Company YouTube: No official company YouTube channel found during official-page review.

At a glance

| Attribute | Details |
|---|---|
| Pricing model | Free |
| Page type | Model family |
| Model source | Own models |
| API cost | No required vendor API cost for local/self-hosted use. |
| Subscription cost | No mandatory subscription for base model access. |
| Model last update | Approximately 2025-07-22 (inferred from the Ollama library's "Updated 7 months ago" label at retrieval time). |
| Parameter count | 12B |
| Best for | Balanced local assistant workloads; mixed coding and reasoning tasks; mid-tier self-hosted LLM stacks |
| Categories | For Solopreneurs, For Small Business, Free AI Tools, Developers, Local LLMs |

Top alternatives

- Qwen2.5: Versatile multilingual open model family with strong long-form writing and instruction-following behavior.
- Llama 3.1: Open model family often used as a balanced local default for general chat, writing, and coding.
- Gemma 2: Older Gemma family branch focused on efficient local text workloads in 2B, 9B, and 27B sizes.

Notes

Mistral NeMo is a practical mid-size local model choice for mixed assistant and coding workloads.

Comparison table

| Tool | Pricing | Page type | Model source | API cost | Subscription cost | Pros | Cons |
|---|---|---|---|---|---|---|---|
| Mistral NeMo | Free | Model family | Own models | No required vendor API cost for local/self-hosted use. | No mandatory subscription for base model access. | Balanced quality for mixed chat and coding tasks; Good step-up option from smaller model families | Heavier than 7B-class models for low-end setups; Context tuning still required for stable throughput |
| Qwen2.5 | Free | Model family | Own models | No required vendor API cost for local/self-hosted use. | No mandatory subscription for base model access. | Strong multilingual quality across tasks; Scales from smaller to larger local deployments | Larger sizes need significant VRAM headroom; Runtime context still requires careful tuning |
| Llama 3.1 | Free | Model family | Own models | No required vendor API cost for local/self-hosted use. | No mandatory subscription for base model access. | Strong quality-to-size balance for local usage; Works well across general assistant tasks | Larger variants need substantial VRAM; Output quality still varies by quant and prompt quality |
| Gemma 2 | Free | Model family | Own models | No required vendor API cost for local/self-hosted use. | No mandatory subscription for base model access. | Efficient performance for its model sizes; Useful for budget-conscious local inference | Newer Gemma branches are stronger for multimodal or longer-context tasks; Larger variants can still pressure limited VRAM |
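Several rows above mention context tuning. As a minimal sketch of what that can look like with a local Ollama server, the snippet below builds a request to Ollama's `/api/generate` endpoint and sets the `num_ctx` option. The default address `http://localhost:11434`, the `8192` context value, and the helper names `build_request` and `generate` are assumptions for illustration, not settings recommended by the source.

```python
import json
import urllib.request

# Assumption: Ollama is running locally on its default port.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt: str, model: str = "mistral-nemo",
                  num_ctx: int = 8192) -> urllib.request.Request:
    """Build a non-streaming generate request with an explicit context size."""
    payload = {
        "model": model,
        "prompt": prompt,
        "stream": False,                  # return one JSON object, not chunks
        "options": {"num_ctx": num_ctx},  # context window in tokens (illustrative value)
    }
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str, **kwargs) -> str:
    """Send the request and return the generated text (needs a running server)."""
    with urllib.request.urlopen(build_request(prompt, **kwargs)) as resp:
        return json.loads(resp.read())["response"]
```

With the model already pulled, `generate("Summarize this changelog.", num_ctx=4096)` would return the model's reply. A larger `num_ctx` increases memory use, so it is worth lowering the value if throughput becomes unstable on limited VRAM.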
