Llama 4 alternatives

Open-weight multimodal family with massive context, but significant policy and license constraints.

This Llama 4 alternatives guide compares pricing, strengths, tradeoffs, and related options.

Llama 4 offers headline-grabbing context scale and multimodal capabilities, but its license is not a permissive open-source license. Solopreneurs should treat it as a high-power option that requires compliance review and heavier infrastructure.

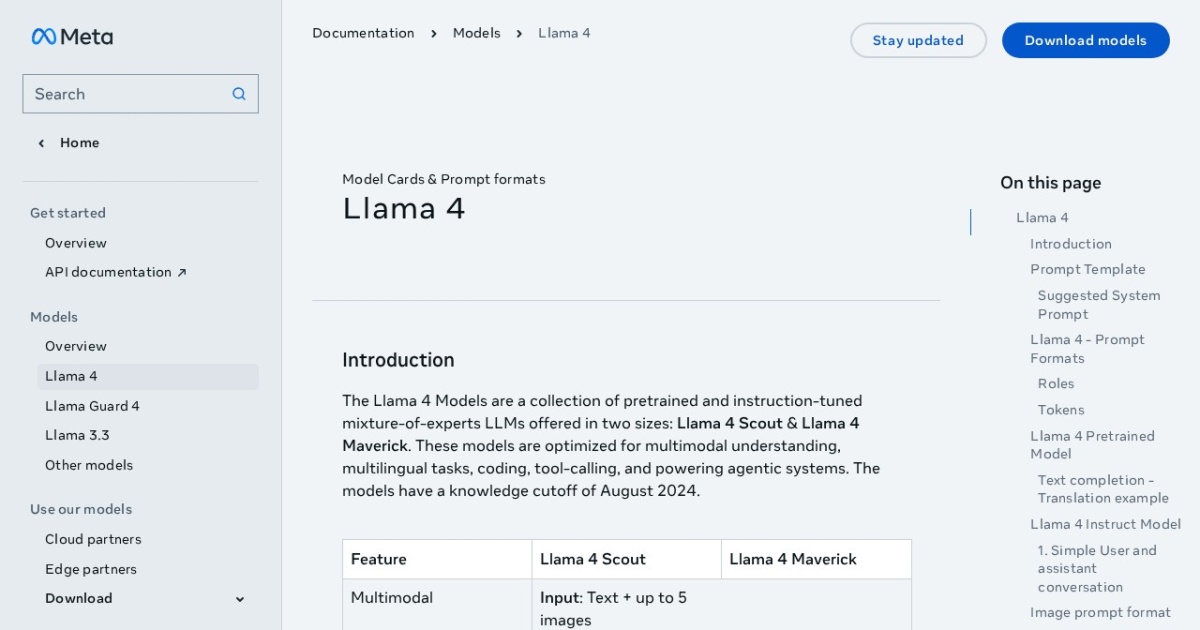

Official site: https://www.llama.com/docs/model-cards-and-prompt-formats/llama4/

Company YouTube: No official company YouTube channel found during official-page review.

At a glance

| Pricing model | Free |
|---|---|
| Page type | Model family |
| Model source | Own models |
| API cost | No required vendor API cost for local/self-hosted use. |
| Subscription cost | No mandatory subscription for base model access. |
| Model last update | 2025-04-05 (Meta "Introducing Llama 4" announcement). |
| Model weight counts | 109B total / 17B active, 400B total / 17B active, 2T total / 288B active |
| Best for | Large multi-document summarization pipelines, Multimodal internal analysis workflows, Teams that can manage license and compliance overhead |
| Categories | For Solopreneurs, For Small Business, Free AI Tools, Local LLMs, Vision LLMs |
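The weight counts in the table above can be turned into a rough hardware estimate. The sketch below assumes a mixture-of-experts model normally has to keep all of its total parameters in memory even though only the active subset runs per token, and that weight memory is roughly parameters times bytes per parameter. The precision names and byte sizes are illustrative assumptions, not figures from Meta, and the estimate ignores KV cache, activations, and runtime overhead.

```python
# Rough weight-memory estimate: total parameters x bytes per parameter.
# Parameter counts are taken from the "Model weight counts" row above.
# The precisions below are illustrative assumptions, not vendor guidance.
MODELS = {
    "109B total / 17B active": (109e9, 17e9),
    "400B total / 17B active": (400e9, 17e9),
    "2T total / 288B active": (2e12, 288e9),
}

BYTES_PER_PARAM = {"bf16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(total_params: float, precision: str) -> float:
    """Approximate GB (10^9 bytes) needed to hold all weights at a precision."""
    return total_params * BYTES_PER_PARAM[precision] / 1e9


if __name__ == "__main__":
    for name, (total, _active) in MODELS.items():
        sizes = ", ".join(
            f"{p}: {weight_memory_gb(total, p):,.0f} GB" for p in BYTES_PER_PARAM
        )
        # Active parameters affect per-token compute, not the memory floor.
        print(f"{name} -> {sizes}")
```

Under these assumptions, even the smallest listed variant needs well over 50 GB for weights alone at 4-bit precision, which is why the infrastructure question tends to come before the model-quality question.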

Top alternatives

- Qwen3.6-35B-A3B: First open-weight Qwen3.6 model: a 35B total / 3B active multimodal MoE focused on agentic coding and practical local use.

- NVIDIA Nemotron: Open model family for agentic AI with reasoning-focused releases across edge, single-GPU, and multi-GPU tiers.

- Gemma 4: Newest Gemma family with Apache-2.0 licensing, multimodal input, 256K context, and sparse on-device variants.

- Qwen3 8B: Apache-2.0 open-weight 8B model with 128K context, local-first deployment, and optional cloud API access.

- DeepSeek-R1: Reasoning-focused open-weight family with MIT core licensing and smaller distilled options.

Notes

Llama 4 can be powerful, but it is usually a compliance-and-infrastructure decision before it is a model-quality decision.

Comparison table

| Tool | Pricing | Page type | Model source | API cost | Subscription cost | Pros | Cons |
|---|---|---|---|---|---|---|---|
| Llama 4 | Free | Model family | Own models | No required vendor API cost for local/self-hosted use. | No mandatory subscription for base model access. | Very large context windows for repository- and corpus-level tasks; Multimodal support for text and image understanding | License includes attribution and derivative naming obligations; Additional licensing conditions can trigger at very large scale |
| Qwen3.6-35B-A3B | Free | Model family | Own models | No required vendor API cost for local/self-hosted use. | No mandatory subscription for base model access. | Much more practical than waiting for very large Qwen3.6 weights; Strong agentic coding uplift over the previous 35B-A3B branch | Still needs meaningful hardware compared with 8B-class local models; Hosted Qwen3.6-Plus remains the stronger top-end option if you can accept API dependence |
| NVIDIA Nemotron | Free | Model family | Own models | No required vendor API cost for local/self-hosted use; hosted NIM/provider endpoints are usage-based. | No mandatory subscription for base open-model access. | Strong focus on reasoning and agentic workloads; Open model access with broad deployment flexibility | Best performance often assumes modern NVIDIA hardware; Model naming and lineup evolve quickly, requiring active tracking |
| Gemma 4 | Free | Model family | Own models | No required vendor API cost for local/self-hosted use. | No mandatory subscription for base model access. | Apache-2.0 licensing is simpler for commercial use than earlier Gemma branches; 256K context is strong for larger document and app workflows | 31B still needs serious local hardware compared with smaller VLM options; Fresh releases can have uneven runtime support at first |
| Qwen3 8B | Free | Model family | Own models | Local: no required vendor API cost. Optional cloud API (Alibaba Cloud Model Studio, pricing page updated 2026-02-11): qwen-max starts at $0.345 input / $1.377 output per 1M tokens; qwen-plus starts at $0.115 input / $0.287 output per 1M tokens (<=128K tier). | No fixed Qwen API subscription is listed in Model Studio; API billing is pay-as-you-go by token usage. | Apache-2.0 license supports broad commercial usage; 128K context is practical for multi-document tasks | Requires local deployment and model-ops basics; Text-only core model line |
| DeepSeek-R1 | Free | Model family | Own models | No required vendor API cost for local/self-hosted use. | No mandatory subscription for base model access. | MIT core licensing is commercially friendly; Strong reasoning orientation for analytical tasks | Flagship model sizes are impractical for most solo local setups; Distill licensing can vary based on upstream model lineage |
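The per-token prices quoted in the Qwen3 8B row can be converted into a monthly estimate. This is a minimal sketch that uses only the "starts at" prices listed in the table (the <=128K tier), so it is a lower bound rather than a quote; the function name and the example token volumes are hypothetical and chosen purely for illustration.

```python
# Pay-as-you-go cost estimate from per-1M-token prices.
# Prices are the "starts at" figures quoted in the comparison table above;
# higher tiers or later price changes would make real bills differ.
PRICES_PER_1M_USD = {
    # model: (input price, output price) per 1M tokens
    "qwen-max": (0.345, 1.377),
    "qwen-plus": (0.115, 0.287),
}


def estimate_cost_usd(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the estimated cost in USD for the given token volumes."""
    input_price, output_price = PRICES_PER_1M_USD[model]
    return (input_tokens / 1_000_000) * input_price + (
        output_tokens / 1_000_000
    ) * output_price


if __name__ == "__main__":
    # Hypothetical monthly workload: 10M input tokens, 2M output tokens.
    for model in PRICES_PER_1M_USD:
        cost = estimate_cost_usd(model, 10_000_000, 2_000_000)
        print(f"{model}: ${cost:.2f} per month")
```

Comparing a figure like this against the cost of hardware for a self-hosted model is one way to decide whether local deployment is worth the operational overhead.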

Related best pages

- Best Free LLMs for Solopreneurs

- Best Free AI Tools for Solopreneurs

- Best AI Automation Tools

- Best AI Email Marketing Tools