Mixtral 8x22B alternatives

Mixtral 8x22B is a mixture-of-experts model family that offers strong quality with favorable active-parameter efficiency.

This guide compares Mixtral 8x22B with alternative models on pricing, strengths, and tradeoffs, and lists related options.

Mixtral 8x22B is a strong option for high-end local inference when you want large-model quality and the efficiency of sparse expert routing, where only part of the model is active for each token.

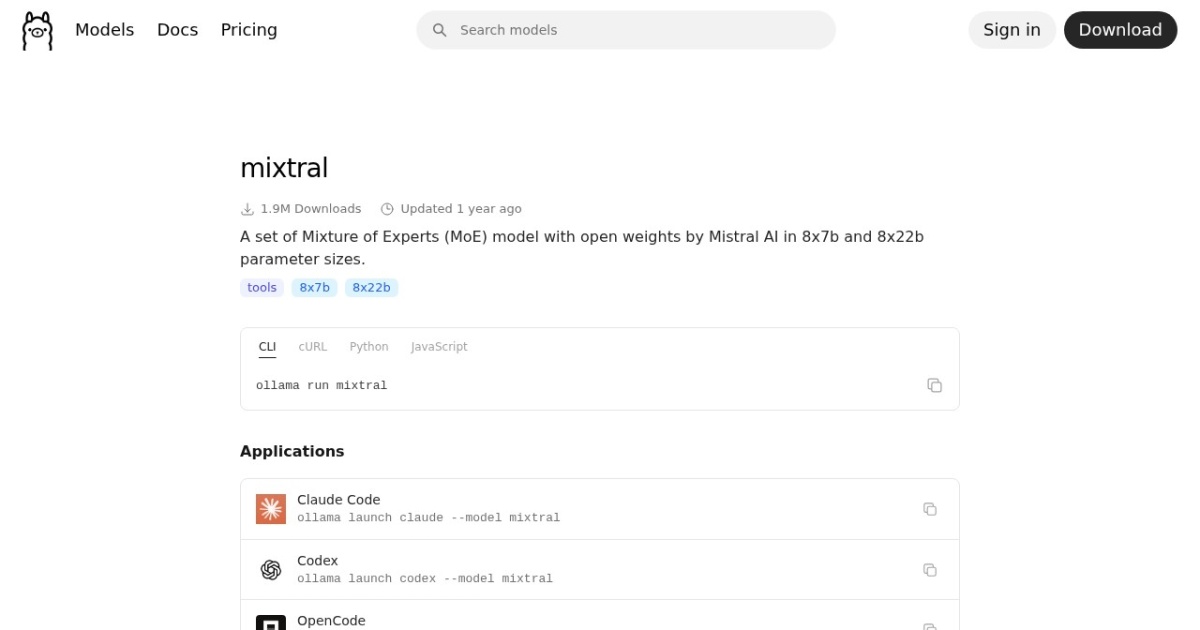

Official site: https://ollama.com/library/mixtral

Company YouTube: No official channel was found during a review of the official pages.

At a glance

| Field | Value |
|---|---|
| Pricing model | Free |
| Page type | Model family |
| Model source | Own models |
| API cost | No required vendor API cost for local/self-hosted use. |
| Subscription cost | No mandatory subscription for base model access. |
| Model last update | 2025-02-22 (Ollama library "Updated 1 year ago", inferred from retrieval date). |
| Model weight counts | 141B total / 39B active |
| Best for | High-end local inference setups, long-context reasoning workflows, users comparing large-model quality tiers |
| Categories | For Solopreneurs, For Small Business, Free AI Tools, Local LLMs |
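The weight counts in the table above translate into a rough memory budget. The sketch below is a back-of-the-envelope estimate, not a measured figure. It assumes all 141B parameters must be resident in memory (every expert is loaded even though only 39B parameters are active per token), and it ignores the KV cache, activations, and runtime overhead. The function name and the chosen bit widths are illustrative.

```python
TOTAL_PARAMS = 141e9   # total parameters, from the table above
ACTIVE_PARAMS = 39e9   # parameters used per token, from the table above


def weight_memory_gb(params: float, bits_per_weight: float) -> float:
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return params * bits_per_weight / 8 / 1e9


# Fraction of the weights that participate in each token's forward pass.
active_fraction = ACTIVE_PARAMS / TOTAL_PARAMS

for bits in (16, 8, 4):
    print(f"{bits}-bit weights: ~{weight_memory_gb(TOTAL_PARAMS, bits):.1f} GB")
print(f"active fraction per token: {active_fraction:.1%}")
```

Under these assumptions, 4-bit weights alone come to roughly 70.5 GB. The active fraction reduces per-token compute, not the amount of memory that has to be loaded. Real quantized files will differ somewhat because quantization formats store extra metadata and often mix bit widths.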

Top alternatives

- Llama 3.3: Larger Llama generation aimed at high-quality local reasoning and assistant workflows.

- Command R+: Large instruction-tuned model oriented to advanced assistant and retrieval-heavy workflows.

- Qwen2.5: Versatile multilingual open model family with strong long-form writing and instruction-following behavior.

Notes

Mixtral 8x22B is a high-end local choice for users who want strong quality and can support heavier runtime demands.
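For readers who run the model through Ollama (the official site listed on this page), a local request might look like the sketch below. It assumes an Ollama server is running on its default local port and that the model has been pulled under the tag `mixtral:8x22b`; both are assumptions to verify against your own installation. The helper names `build_request` and `generate` are hypothetical, and `num_ctx` is set explicitly because context size is one of the main runtime demands.

```python
import json
import urllib.request

# Default local Ollama endpoint (assumed; adjust if your server differs).
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt: str, model: str = "mixtral:8x22b",
                  num_ctx: int = 4096) -> urllib.request.Request:
    """Build a non-streaming generate request; nothing is sent yet."""
    payload = {
        "model": model,            # assumed tag, check your local model list
        "prompt": prompt,
        "stream": False,           # ask for a single JSON response
        "options": {"num_ctx": num_ctx},  # context window in tokens
    }
    return urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str, **kwargs) -> str:
    """Send the request to a running Ollama server and return the text."""
    with urllib.request.urlopen(build_request(prompt, **kwargs)) as resp:
        return json.loads(resp.read())["response"]
```

With the server running, calling `generate("Summarize mixture-of-experts routing in two sentences.", num_ctx=2048)` would return the generated text. Keeping request construction separate from sending makes the payload easy to inspect before committing to a long generation on heavy hardware.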

Comparison table

| Tool | Pricing | Page type | Model source | API cost | Subscription cost | Pros | Cons |
|---|---|---|---|---|---|---|---|
| Mixtral 8x22B | Free | Model family | Own models | No required vendor API cost for local/self-hosted use. | No mandatory subscription for base model access. | Strong quality for advanced local tasks; MoE design can improve quality-per-compute behavior | Complex model behavior and heavier deployment demands; Requires high VRAM headroom for stable operation |
| Llama 3.3 | Free | Model family | Own models | No required vendor API cost for local/self-hosted use. | No mandatory subscription for base model access. | Strong quality for large-model local inference; Good fit for advanced reasoning and writing tasks | Demands high-end hardware for smooth performance; Can spill quickly at oversized contexts |
| Command R+ | Free | Model family | Own models | No required vendor API cost for local/self-hosted use. | No mandatory subscription for base model access. | Strong instruction-following on complex prompts; Useful for retrieval-heavy and structured workflows | High hardware requirements for practical speed; Can require aggressive context tuning to avoid spill |
| Qwen2.5 | Free | Model family | Own models | No required vendor API cost for local/self-hosted use. | No mandatory subscription for base model access. | Strong multilingual quality across tasks; Scales from smaller to larger local deployments | Larger sizes need significant VRAM headroom; Runtime context still requires careful tuning |
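Several of the Cons entries above mention context tuning and spill. One likely reason is that the key-value cache grows linearly with context length and competes with the weights for VRAM. The sketch below uses the standard estimate for a transformer with grouped key-value heads. The architecture values in `ASSUMED` are placeholders for illustration, not figures taken from this page; read the real ones from the configuration of whichever model you run.

```python
def kv_cache_gb(context_tokens: int, layers: int, kv_heads: int,
                head_dim: int, bytes_per_value: int = 2) -> float:
    """Approximate KV-cache size in GB (1 GB = 1e9 bytes) for one sequence."""
    # Factor 2: one key and one value vector per layer, per KV head, per token.
    per_token_bytes = 2 * layers * kv_heads * head_dim * bytes_per_value
    return context_tokens * per_token_bytes / 1e9


# Placeholder architecture values (assumptions for illustration only).
ASSUMED = dict(layers=56, kv_heads=8, head_dim=128)

for ctx in (4096, 16384, 65536):
    print(f"context {ctx:>6}: ~{kv_cache_gb(ctx, **ASSUMED):.2f} GB KV cache")
```

Because the estimate is linear in `context_tokens`, halving the context halves the cache, which is why lowering the context window is usually the first adjustment to try when a model no longer fits.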
