LangSmith alternatives

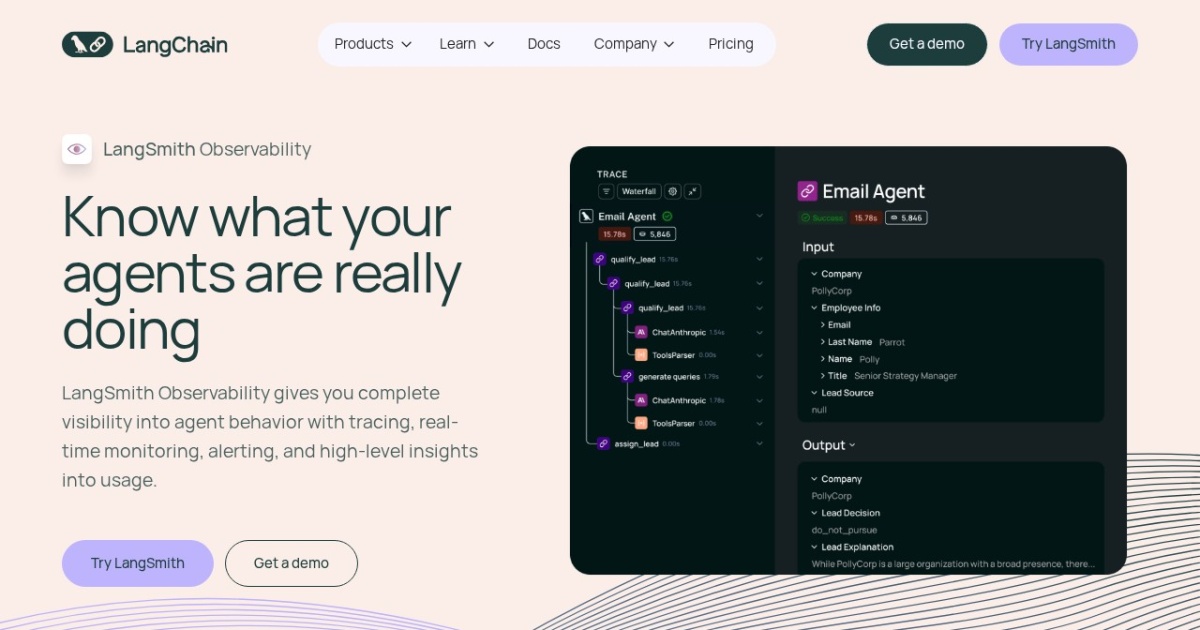

LangSmith is an agent observability and evaluation platform for tracing, debugging, and improving LLM workflows.

This LangSmith alternatives guide compares pricing, strengths, tradeoffs, and related options.

LangSmith fits teams that need production visibility into agent runs, tool calls, evaluation metrics, and regression tracking before scaling usage.

Official site: https://www.langchain.com/langsmith

Company YouTube: https://www.youtube.com/@LangChain

At a glance

| Pricing model | Freemium |
|---|---|
| Page type | Product/service |
| Model source | 3rd-party models |
| Price range | Free-$100+/mo |
| Model last update | 2026-03-19 (official LangChain changelog: Agent Builder is now LangSmith Fleet). |
| Best for | Agent quality monitoring and regression prevention, Teams running production-like LLM workflows |
| Categories | For Solopreneurs, For Small Business, Free AI Tools, Automation, Developers |

Top alternatives

- Langfuse: Open-source LLM observability platform for traces, evaluations, prompts, and production monitoring.

- Helicone: LLM observability layer with request logging, analytics, and cost tracking across model providers.

- Arize Phoenix: Open-source LLM tracing and evaluation toolkit for debugging, experimentation, and quality analysis.

Notes

LangSmith is most valuable when you treat observability and evaluation as mandatory parts of the agent stack.
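As a rough illustration of what "mandatory" instrumentation can look like, here is a minimal, tool-agnostic sketch in plain Python. It does not use the LangSmith, Langfuse, Helicone, or Phoenix SDKs; the names `traced`, `TRACE_LOG`, `lookup_tool`, `agent_run`, and `regressed`, as well as the 0.02 tolerance, are hypothetical and chosen only for this example. The idea is that every agent step and tool call is wrapped so it leaves a trace record, and that evaluation scores are compared against a baseline before a change ships.

```python
import functools
import time
from typing import Any, Callable

# Hypothetical in-memory sink; a real setup would send records to an
# observability backend instead of a Python list.
TRACE_LOG: list[dict[str, Any]] = []


def traced(name: str) -> Callable:
    """Wrap a function so every call appends one trace record."""
    def decorator(fn: Callable) -> Callable:
        @functools.wraps(fn)
        def wrapper(*args: Any, **kwargs: Any) -> Any:
            start = time.perf_counter()
            record: dict[str, Any] = {
                "name": name,
                "inputs": {"args": args, "kwargs": kwargs},
            }
            try:
                result = fn(*args, **kwargs)
                record["output"] = result
                return result
            except Exception as exc:
                # Failed calls are recorded too, then re-raised unchanged.
                record["error"] = repr(exc)
                raise
            finally:
                record["duration_s"] = time.perf_counter() - start
                TRACE_LOG.append(record)
        return wrapper
    return decorator


@traced("lookup_tool")
def lookup_tool(query: str) -> str:
    # Stand-in for a real tool call (search, database, API).
    return f"result for {query}"


@traced("agent_run")
def agent_run(question: str) -> str:
    # Stand-in for a multi-step agent: one tool call plus post-processing.
    return lookup_tool(question).upper()


def regressed(baseline: float, current: float, tolerance: float = 0.02) -> bool:
    """Return True when the current eval score falls below baseline minus tolerance."""
    return current < baseline - tolerance
```

Calling `agent_run("pricing")` leaves two records in `TRACE_LOG`, the inner tool call first and the enclosing agent run second, since each record is appended when its call finishes. A check such as `regressed(0.90, 0.85)` could then gate a release in CI. The hosted and open-source tools compared on this page provide their own SDKs for the same pattern, so treat this only as a picture of the workflow, not as any vendor's API.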

Comparison table

| Tool | Pricing | Page type | Model source | Price range | Pros | Cons |
|---|---|---|---|---|---|---|
| LangSmith | Freemium | Product/service | 3rd-party models | Free-$100+/mo | Strong tracing and debugging for multi-step agent runs; Supports evaluation workflows and monitoring over time | Added platform cost on top of model and tool spend; Setup requires disciplined instrumentation practices |
| Langfuse | Freemium | Open-source project | 3rd-party models | Free self-hosted; paid cloud tiers available | Open-source with self-hosting option; Strong trace visibility for multi-step LLM and agent runs | Setup and operations are your responsibility when self-hosted; Team processes are required to keep traces and evals useful |
| Helicone | Freemium | Product/service | 3rd-party models | Free tier + paid plans | Quick way to add model usage analytics and cost visibility; Works across multiple LLM providers | Less focused on deep eval workflows than full eval platforms; Advanced use cases may still need custom instrumentation |
| Arize Phoenix | Free | Open-source project | 3rd-party models | Free (open-source) | Open-source with strong debugging depth; Useful for eval experimentation and quality analysis | Requires technical setup and maintenance; UI/UX may feel less turnkey than managed SaaS options |

Related best pages

- Best Free LLMs for Solopreneurs

- Best Free AI Tools for Solopreneurs

- Best AI Automation Tools

- Best AI Email Marketing Tools