Baseten alternatives

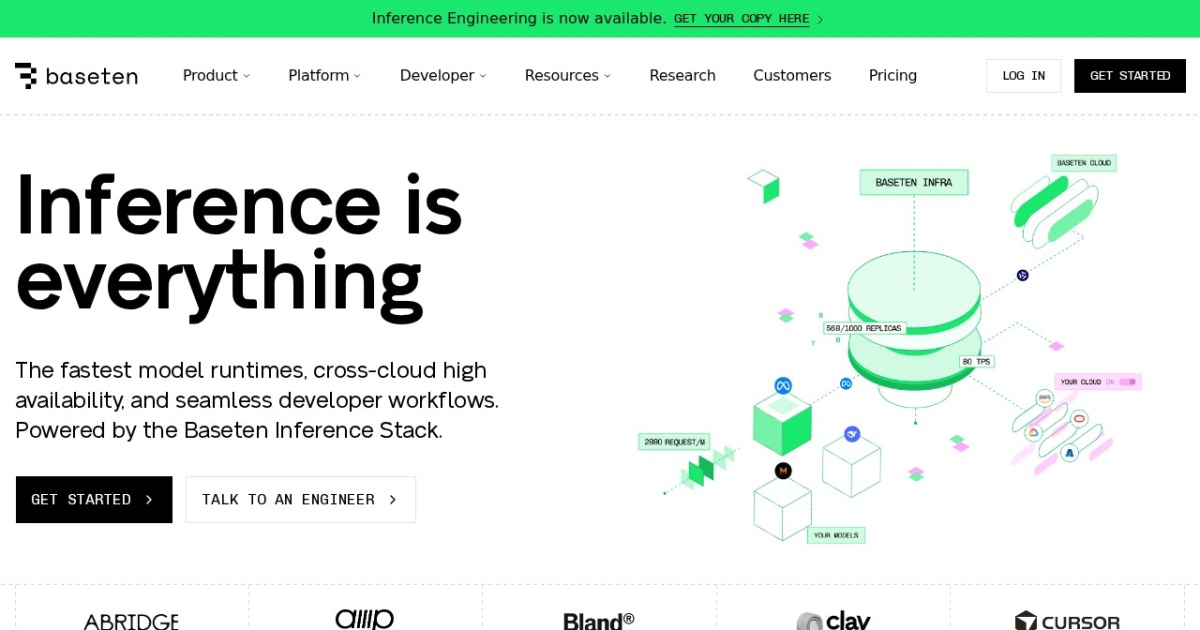

Model deployment platform for serving ML and LLM workloads with production APIs.

This Baseten alternatives guide compares pricing, strengths, tradeoffs, and related options.

Baseten is included in this directory because it supports repeatable creator and solopreneur workflows at MVP scale.

Official site: https://www.baseten.co/

Company YouTube: https://www.youtube.com/channel/UCOCLmqf7Jy3LcsO0SMBGP_Q

At a glance

| Pricing model | Subscription |
|---|---|
| Page type | Product/service |
| Model source | 3rd-party models |
| Price range | See official pricing |
| Best for | Teams running production-like LLM workflows, Self-managed app and API deployments |
| Categories | Developers |

Top alternatives

Notes

Baseten is useful for teams that need managed model serving with production-style API deployment.

Comparison table

| Tool | Pricing | Page type | Model source | Price range | API cost | Subscription cost | Pros | Cons |
|---|---|---|---|---|---|---|---|---|
| Baseten | Subscription | Product/service | 3rd-party models | See official pricing | No separate public API pricing is listed; access appears tied to the provider's plans or hosted usage. | Subscription cost follows the listed plan range above. | Fast setup for solo teams; Useful template support for repeatable workflows | Costs can increase with higher usage; Output quality depends on prompt quality |
| n8n | Subscription | Product/service | 3rd-party models | Free-$200+/mo | No separate public API pricing is listed; access appears tied to the provider's plans or hosted usage. | Subscription cost follows the listed plan range above. | Fast setup for solo teams; Useful template support for repeatable workflows | Costs can increase with higher usage; Output quality depends on prompt quality |
| Make | Freemium | Product/service | 3rd-party models | Free-$34+/mo | No separate public API pricing is listed; access appears tied to the provider's plans or hosted usage. | Subscription cost follows the listed plan range above. | Fast setup for solo teams; Useful template support for repeatable workflows | Costs can increase with higher usage; Output quality depends on prompt quality |
| Ollama | Free | Open-source project | 3rd-party models | Free (open-source) | No required vendor API cost for local/self-hosted use. | No mandatory subscription for base model access. | Fast local setup for private model workflows; Easy model pull, run, and API access patterns | Performance depends heavily on your hardware; Large models still require careful memory planning |